Soft Correspondences in Multimodal Scene Parsing


Sep 28, 2017

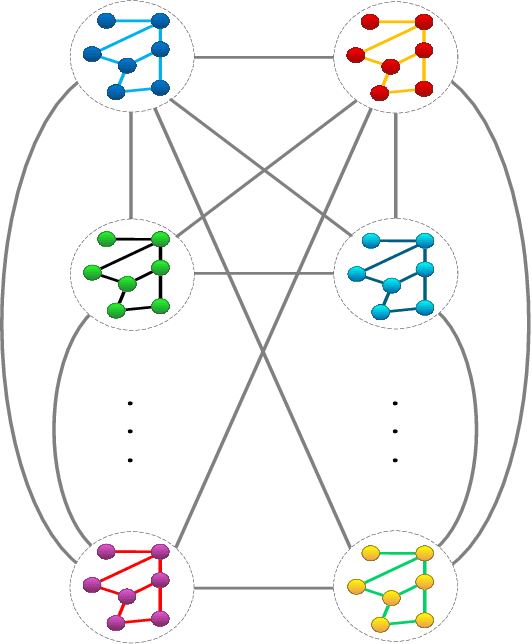

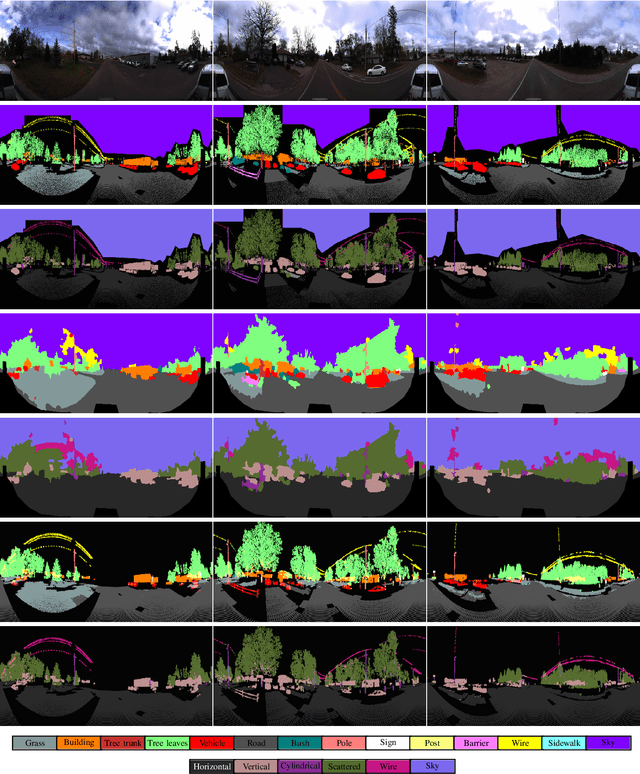

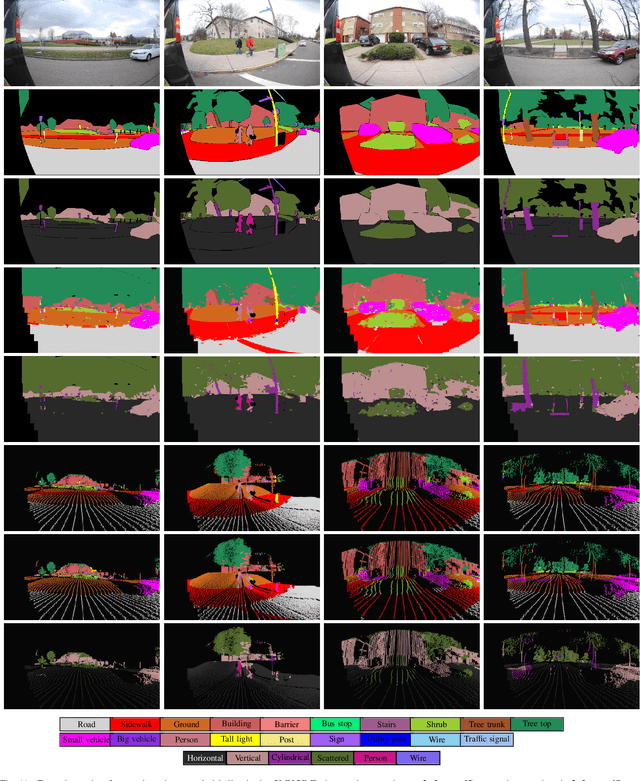

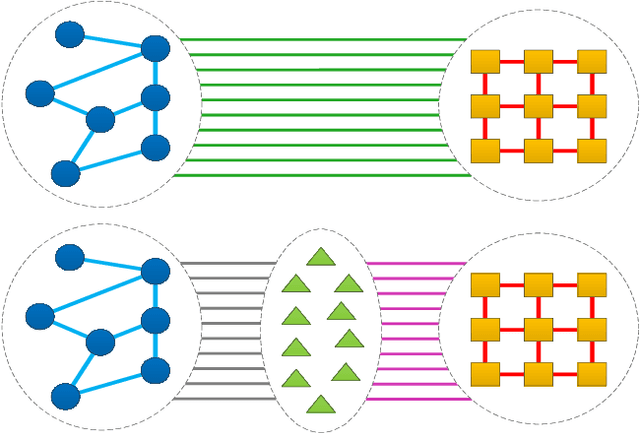

Exploiting multiple modalities for semantic scene parsing has been shown to improve accuracy over the single-modality scenario. However, multimodal datasets often suffer from problems such as data misalignment and label inconsistencies, which violate the assumption made by existing methods that corresponding regions in two modalities must have identical labels. We propose to address this issue by formulating multimodal semantic labeling as inference in a CRF and introducing latent nodes to explicitly model inconsistencies between two modalities. These latent nodes allow us not only to leverage information from both domains to improve their labeling, but also to cut the edges between inconsistent regions. We propose to learn intra-domain and inter-domain potential functions from training data to avoid hand-tuning of the model parameters. We evaluate our approach on two publicly available datasets containing 2D and 3D data. Thanks to our latent nodes and our learning strategy, our method outperforms the state of the art in both cases. Moreover, to highlight the benefits of geometric information and the potential of our method for simultaneous 2D/3D semantic and geometric inference, we performed joint inference of semantic and geometric classes in both 2D and 3D, which led to satisfactory improvements of the labeling results on both datasets.
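To make the role of a latent node concrete, the following is a minimal toy sketch, not the paper's model. It assumes a single 2D region and a single 3D region joined by one binary latent variable (`linked`), hand-picked costs instead of learned potentials, and brute-force enumeration instead of a real CRF inference algorithm. All names (`LABELS`, `energy`, `map_inference`, `mismatch_cost`, `cut_cost`) are hypothetical and chosen only for illustration.

```python
from itertools import product

# Hypothetical two-label problem; the real datasets have many more classes.
LABELS = ["wall", "floor"]


def energy(y2d, y3d, linked, unary2d, unary3d, mismatch_cost=2.0, cut_cost=1.0):
    """Energy of one joint assignment (lower is better).

    unary2d / unary3d map each label to a per-modality cost.
    linked is the latent variable: True keeps the inter-domain edge,
    False cuts it. The costs are illustrative assumptions, not learned.
    """
    e = unary2d[y2d] + unary3d[y3d]
    if linked:
        # Edge kept: disagreeing labels across modalities are penalized.
        e += mismatch_cost if y2d != y3d else 0.0
    else:
        # Edge cut: the labels are independent, at a fixed price.
        e += cut_cost
    return e


def map_inference(unary2d, unary3d, **costs):
    """Exhaustively search all (y2d, y3d, linked) assignments."""
    candidates = product(LABELS, LABELS, [True, False])
    return min(candidates, key=lambda c: energy(*c, unary2d, unary3d, **costs))


# Case A: 3D evidence is weak, so the confident 2D label is shared.
weak_3d = map_inference(
    {"wall": 0.1, "floor": 3.0},
    {"wall": 1.0, "floor": 0.8},
)

# Case B: both modalities are confident but disagree, so the edge is cut.
conflict = map_inference(
    {"wall": 0.1, "floor": 3.0},
    {"wall": 3.0, "floor": 0.1},
)

print(weak_3d)   # ('wall', 'wall', True)
print(conflict)  # ('wall', 'floor', False)
```

In this toy setting, the latent variable lets the two regions share information when their evidence is compatible and decouples them when forcing agreement would cost more than cutting the edge. This mirrors the two behaviors described above, although the actual approach learns its potentials from training data.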
