SideInfNet: A Deep Neural Network for Semi-Automatic Semantic Segmentation with Side Information

Paper and Code

Mar 15, 2020

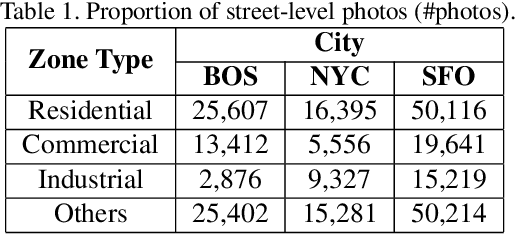

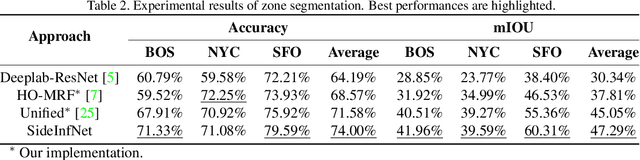

Fully automatic execution is the ultimate goal for many computer vision applications. However, this objective is not always realistic in tasks associated with high failure costs, such as medical applications. For these tasks, a compromise between fully automatic execution and user interaction is often preferred because it offers desirable accuracy and performance. Semi-automatic methods require minimal effort from experts by allowing them to provide cues that guide computer algorithms. Inspired by the practicality and applicability of the semi-automatic approach, this paper proposes a novel deep neural network architecture, SideInfNet, that effectively integrates features learnt from images with side information extracted from user annotations to produce high-quality semantic segmentation results. To evaluate our method, we applied the proposed network to three semantic segmentation tasks and conducted extensive experiments on benchmark datasets. Experimental results and comparisons with prior work verify the superiority of our model, suggesting its generality and effectiveness in semi-automatic semantic segmentation.
