SCIM: Simultaneous Clustering, Inference, and Mapping for Open-World Semantic Scene Understanding

Jun 21, 2022

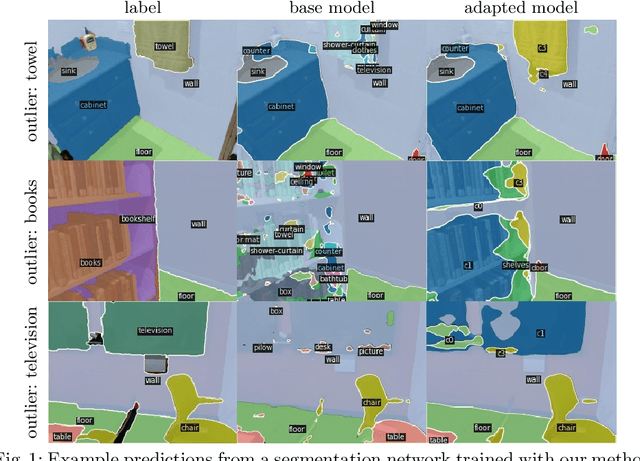

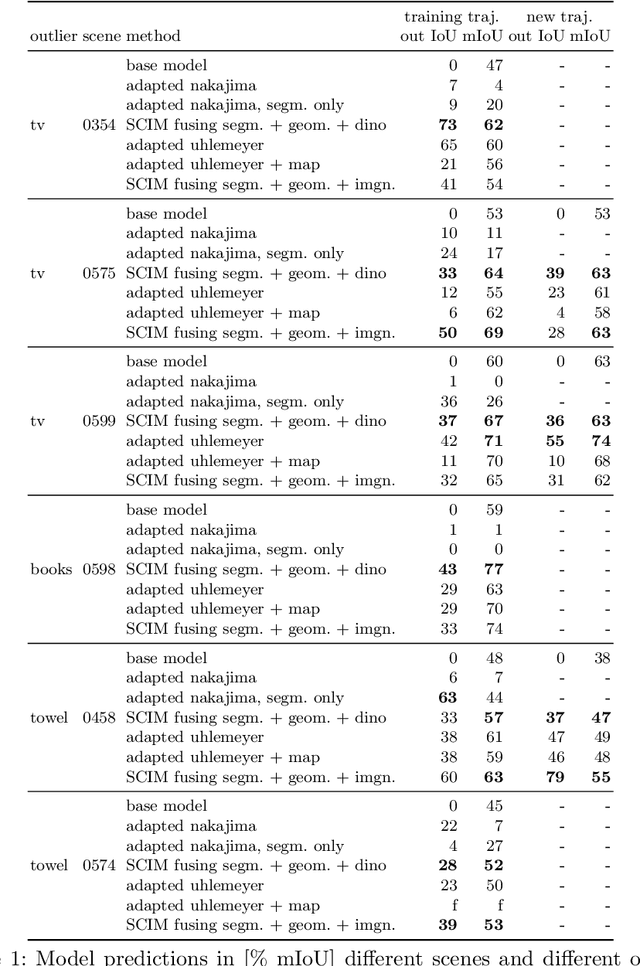

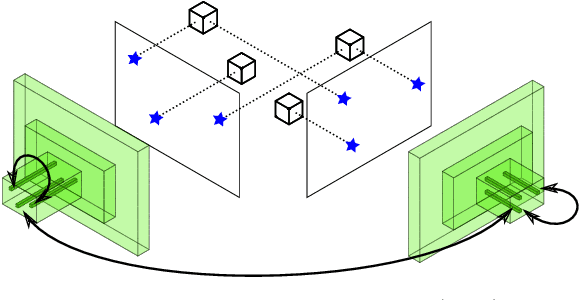

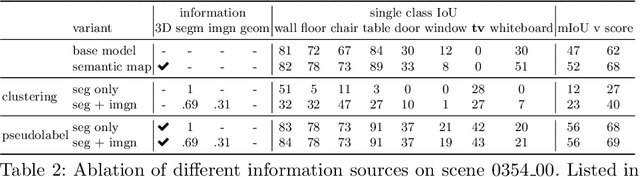

In order to operate in human environments, a robot's semantic perception has to overcome open-world challenges such as novel objects and domain gaps. Autonomous deployment to such environments therefore requires robots to update their knowledge and learn without supervision. We investigate how a robot can autonomously discover novel semantic classes and improve accuracy on known classes when exploring an unknown environment. To this end, we develop a general framework for mapping and clustering that we then use to generate a self-supervised learning signal to update a semantic segmentation model. In particular, we show how clustering parameters can be optimized during deployment and that fusion of multiple observation modalities improves novel object discovery compared to prior work.
