Robust Visual Odometry Using Position-Aware Flow and Geometric Bundle Adjustment


Nov 22, 2021

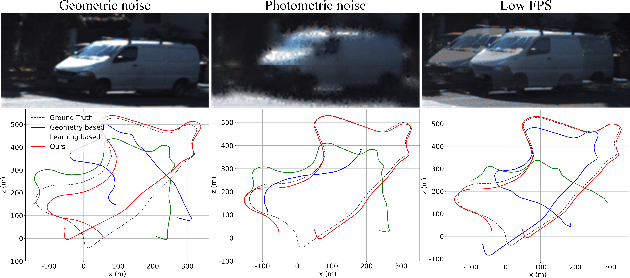

In this paper, the essential problem of robust visual odometry (VO) is approached by incorporating geometry-based methods into a deep-learning architecture in a self-supervised manner. Pure geometry-based algorithms are generally less robust than deep learning in feature-point extraction and matching, but they perform well in ego-motion estimation because of their well-established geometric theory. In this work, a novel optical flow network (PANet) built on a position-aware mechanism is proposed first. Then, a novel system is proposed that jointly estimates depth, optical flow, and ego-motion without a dedicated network for learning ego-motion. The key component of the proposed system is an improved bundle adjustment module that incorporates multiple sampling, initialization of ego-motion, dynamic damping factor adjustment, and Jacobian matrix weighting. In addition, a novel relative photometric loss function is introduced to improve depth estimation accuracy. Experiments show that the proposed system not only outperforms other state-of-the-art self-supervised learning-based methods in depth, flow, and VO estimation on the KITTI dataset, but also significantly improves robustness compared with geometry-based, learning-based, and hybrid VO systems. Further experiments show that the model generalizes well and performs strongly in challenging indoor (TUM-RGBD) and outdoor (KAIST) scenes.
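
To make the terms "dynamic damping factor adjustment" and "Jacobian matrix weighting" concrete, the following is a minimal sketch of a generic weighted Levenberg-Marquardt solver, the kind of optimizer commonly used inside bundle adjustment. It is not the paper's module: the function name `levenberg_marquardt`, the per-residual `weights` vector, the factor-of-10 damping schedule, and the toy curve-fitting problem are all assumptions chosen for illustration, and the multiple sampling and ego-motion initialization steps are not shown.

```python
import numpy as np

def levenberg_marquardt(residual_fn, jacobian_fn, x0, weights=None,
                        lam=1e-3, iters=100):
    """Generic weighted LM (illustrative, not the paper's implementation)."""
    x = np.asarray(x0, dtype=float)
    r = residual_fn(x)
    # Hypothetical per-residual weights standing in for Jacobian weighting.
    w = np.ones_like(r) if weights is None else np.asarray(weights, dtype=float)
    cost = 0.5 * np.sum(w * r**2)
    for _ in range(iters):
        J = jacobian_fn(x)
        JTW = J.T * w                      # scale each residual's row of J by its weight
        H = JTW @ J                        # Gauss-Newton approximation of the Hessian
        g = JTW @ r                        # gradient of the weighted cost
        step = np.linalg.solve(H + lam * np.eye(x.size), -g)
        x_new = x + step
        r_new = residual_fn(x_new)
        cost_new = 0.5 * np.sum(w * r_new**2)
        if cost_new < cost:
            # Step accepted: trust the model more, move toward Gauss-Newton.
            x, r, cost = x_new, r_new, cost_new
            lam = max(lam / 10.0, 1e-12)
        else:
            # Step rejected: increase damping, move toward gradient descent.
            lam = min(lam * 10.0, 1e12)
        if np.linalg.norm(step) < 1e-12:
            break
    return x

# Toy problem (assumed for illustration): recover a, b in y = a * exp(b * t).
t = np.linspace(0.0, 2.0, 20)
y = 2.0 * np.exp(-1.0 * t)

def residual(p):
    return p[0] * np.exp(p[1] * t) - y

def jacobian(p):
    e = np.exp(p[1] * t)
    return np.stack([e, p[0] * t * e], axis=1)

estimate = levenberg_marquardt(residual, jacobian, x0=[1.0, 0.0])
print(estimate)
```

In a VO setting the parameter vector would be the camera pose and the residuals would be reprojection or flow-consistency errors; the damping update above is the simplest common schedule, and a learned or confidence-based weighting would replace the uniform `weights` used here.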
