Robust Task-Oriented Dialogue Generation with Contrastive Pre-training and Adversarial Filtering

Paper and Code

May 20, 2022

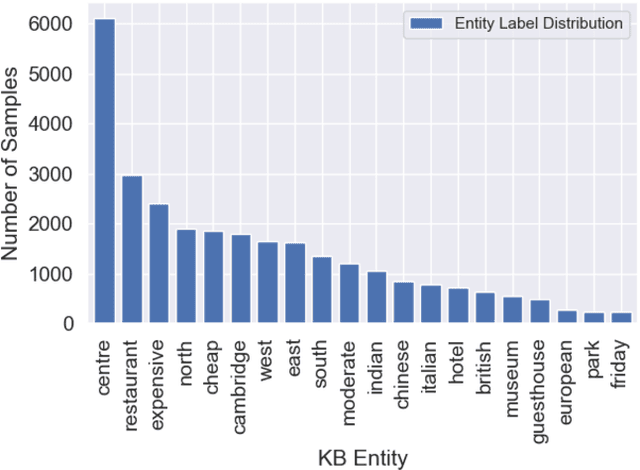

Data artifacts incentivize machine learning models to learn non-transferable generalizations by taking advantage of shortcuts in the data, and there is growing evidence that data artifacts play a role for the strong results that deep learning models achieve in recent natural language processing benchmarks. In this paper, we focus on task-oriented dialogue and investigate whether popular datasets such as MultiWOZ contain such data artifacts. We found that by only keeping frequent phrases in the training examples, state-of-the-art models perform similarly compared to the variant trained with full data, suggesting they exploit these spurious correlations to solve the task. Motivated by this, we propose a contrastive learning based framework to encourage the model to ignore these cues and focus on learning generalisable patterns. We also experiment with adversarial filtering to remove "easy" training instances so that the model would focus on learning from the "harder" instances. We conduct a number of generalization experiments -- e.g., cross-domain/dataset and adversarial tests -- to assess the robustness of our approach and found that it works exceptionally well.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge