Robust Performance-driven 3D Face Tracking in Long Range Depth Scenes

Paper and Code

Jul 10, 2015

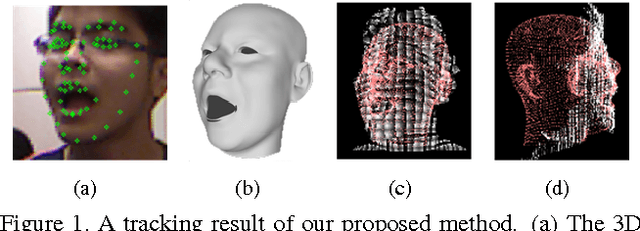

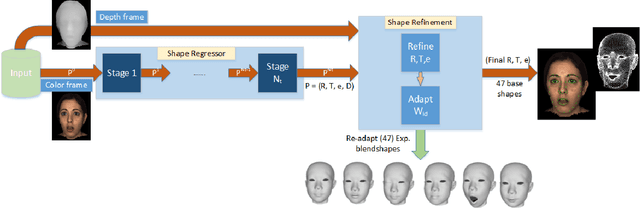

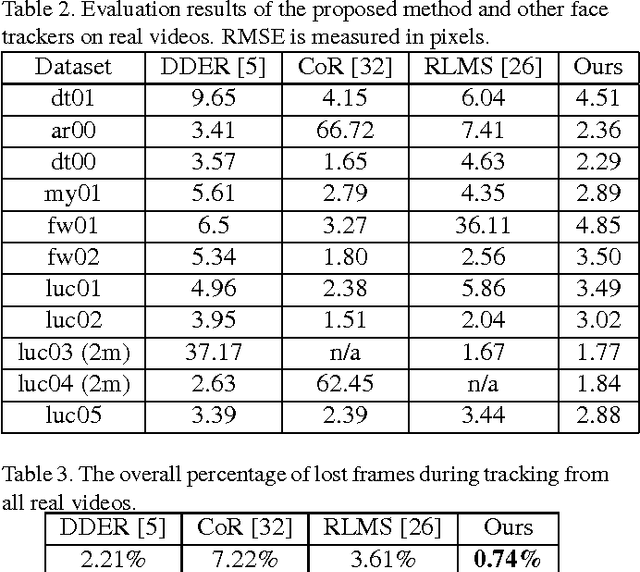

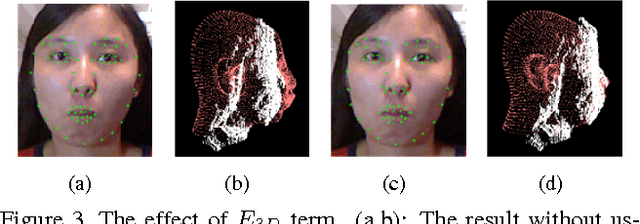

We introduce a novel robust hybrid 3D face tracking framework from RGBD video streams, which is capable of tracking head pose and facial actions without pre-calibration or intervention from a user. In particular, we emphasize on improving the tracking performance in instances where the tracked subject is at a large distance from the cameras, and the quality of point cloud deteriorates severely. This is accomplished by the combination of a flexible 3D shape regressor and the joint 2D+3D optimization on shape parameters. Our approach fits facial blendshapes to the point cloud of the human head, while being driven by an efficient and rapid 3D shape regressor trained on generic RGB datasets. As an on-line tracking system, the identity of the unknown user is adapted on-the-fly resulting in improved 3D model reconstruction and consequently better tracking performance. The result is a robust RGBD face tracker, capable of handling a wide range of target scene depths, beyond those that can be afforded by traditional depth or RGB face trackers. Lastly, since the blendshape is not able to accurately recover the real facial shape, we use the tracked 3D face model as a prior in a novel filtering process to further refine the depth map for use in other tasks, such as 3D reconstruction.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge