Robust, Occlusion-aware Pose Estimation for Objects Grasped by Adaptive Hands

Paper and Code

Mar 07, 2020

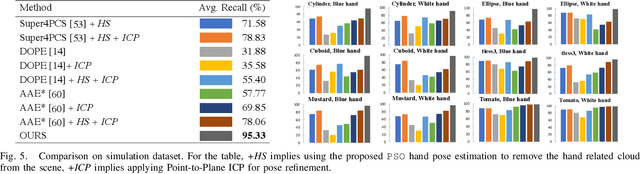

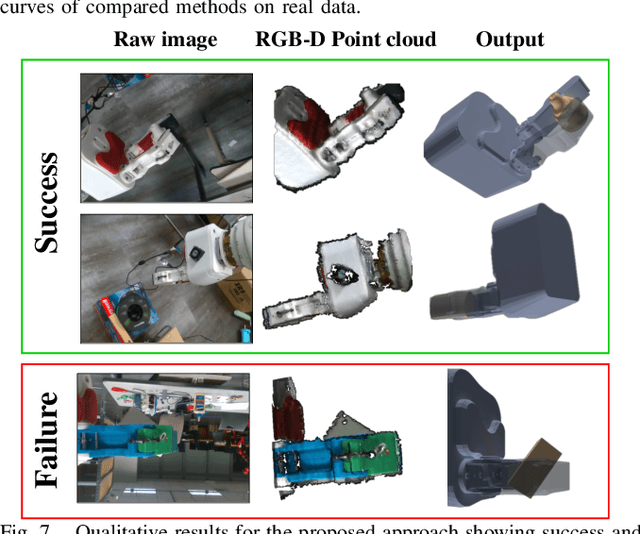

Many manipulation tasks, such as placement or within-hand manipulation, require the object's pose relative to a robot hand. The task is difficult when the hand significantly occludes the object. It is especially hard for adaptive hands, for which it is not easy to detect the finger's configuration. In addition, RGB-only approaches face issues with texture-less objects or when the hand and the object look similar. This paper presents a depth-based framework, which aims for robust pose estimation and short response times. The approach detects the adaptive hand's state via efficient parallel search given the highest overlap between the hand's model and the point cloud. The hand's point cloud is pruned and robust global registration is performed to generate object pose hypotheses, which are clustered. False hypotheses are pruned via physical reasoning. The remaining poses' quality is evaluated given agreement with observed data. Extensive evaluation on synthetic and real data demonstrates the accuracy and computational efficiency of the framework when applied on challenging, highly-occluded scenarios for different object types. An ablation study identifies how the framework's components help in performance. This work also provides a dataset for in-hand 6D object pose estimation. Code and dataset are available at: https://github.com/wenbowen123/icra20-hand-object-pose

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge