Robust Estimation of Tree Structured Markov Random Fields


Feb 22, 2021

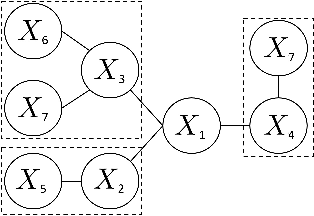

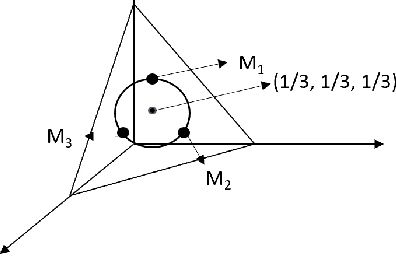

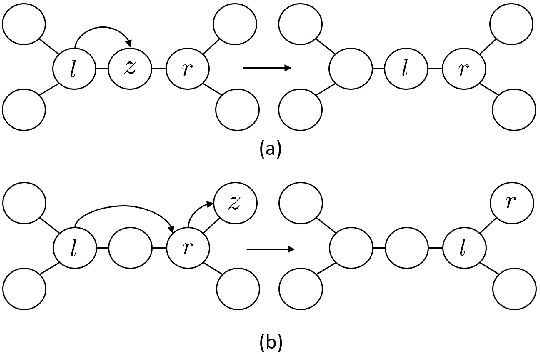

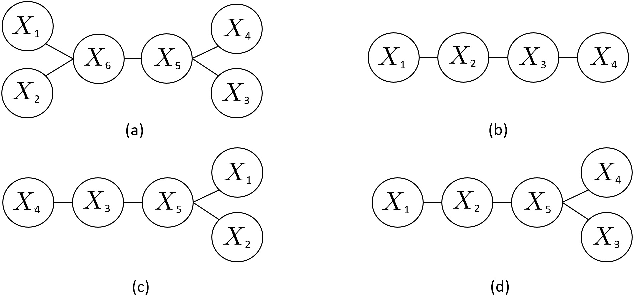

We study the problem of learning tree-structured Markov random fields (MRFs) on discrete random variables with common support when the observations are corrupted by unknown noise. Because noise in the observations obscures the original tree structure, it is unclear to what extent a tree-structured MRF can be recovered from noisy observations. We show that under a general noise model, the underlying tree structure can be recovered only up to an equivalence class in which each leaf node is indistinguishable from its parent and siblings, forming a leaf cluster. Because this indistinguishability arises from contrived noise models, we then study the natural k-ary symmetric channel noise model, in which the value of each node is changed to a uniform value in the support with a probability that is unknown and unequal across nodes. Here, the answer becomes much more nuanced. We show that with a support size of 2, under the binary symmetric channel noise model, the leaf clusters remain indistinguishable. For support size 3 and up, the recoverability of a leaf cluster is dictated by the joint probability mass function of the nodes within it. We characterize recoverability precisely by deriving a necessary and sufficient condition for a leaf cluster to be recoverable. We provide an algorithm that recovers the tree if this condition is satisfied, and otherwise recovers the tree up to the leaf clusters that fail it.
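To make the k-ary symmetric channel noise model concrete, the following is a minimal simulation sketch, not the paper's code. It assumes the support is encoded as the integers 0 to k-1, and that "changed to a uniform value" means a fresh uniform draw over the whole support, so the draw may coincide with the original value. The function name `k_ary_symmetric_channel` and the parameter `flip_probs` (one noise probability per node) are hypothetical names introduced for illustration.

```python
import numpy as np


def k_ary_symmetric_channel(samples, k, flip_probs, rng=None):
    """Corrupt discrete samples with per-node k-ary symmetric noise.

    samples    : (n_samples, n_nodes) integer array with values in {0, ..., k-1}
    k          : support size shared by all nodes
    flip_probs : (n_nodes,) array; node i is resampled with probability flip_probs[i]
                 (hypothetical name; in the abstract's setting these are unknown)
    rng        : optional numpy Generator for reproducibility
    """
    rng = np.random.default_rng() if rng is None else rng
    samples = np.asarray(samples)
    flip_probs = np.asarray(flip_probs, dtype=float)
    n_samples, n_nodes = samples.shape
    if flip_probs.shape != (n_nodes,):
        raise ValueError("flip_probs must have one entry per node")

    # Decide independently, per sample and per node, whether to resample.
    # flip_probs broadcasts across the sample axis.
    resample = rng.random((n_samples, n_nodes)) < flip_probs

    # Assumption: the replacement value is uniform over the full support,
    # so it can equal the original value.
    uniform_values = rng.integers(0, k, size=(n_samples, n_nodes))

    return np.where(resample, uniform_values, samples)


# Usage: three nodes with support size 3 and unequal noise probabilities.
clean = np.array([[0, 1, 2], [2, 2, 0], [1, 0, 1]])
noisy = k_ary_symmetric_channel(clean, k=3, flip_probs=[0.1, 0.3, 0.5],
                                rng=np.random.default_rng(0))
```

Under this reading, with k = 2 a node with noise probability q has its bit flipped with probability q/2, which is a binary symmetric channel, the case the abstract singles out as leaving leaf clusters indistinguishable.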
