Risk of Bias in Chest X-ray Foundation Models


Sep 07, 2022

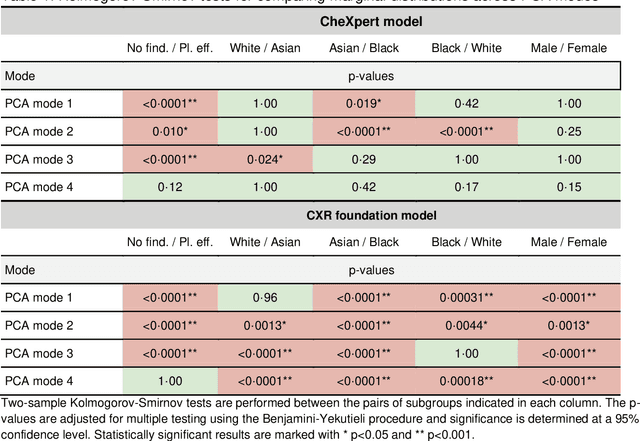

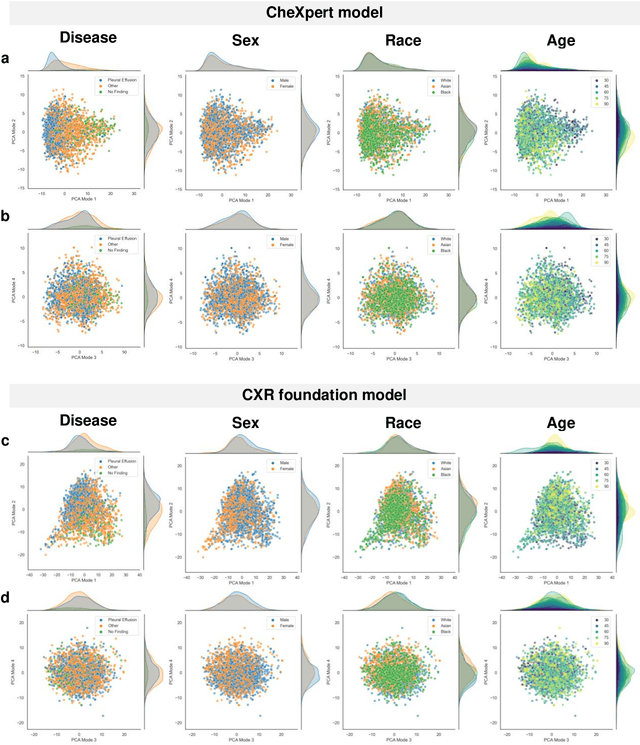

Foundation models are considered a breakthrough in all applications of AI, promising robust and reusable mechanisms for feature extraction and alleviating the need for large amounts of high-quality training data for task-specific prediction models. However, foundation models may encode and even reinforce existing biases present in historic datasets. Given the limited ability to scrutinize foundation models, it remains unclear whether the opportunities outweigh the risks in safety-critical applications such as clinical decision-making. In our statistical bias analysis of a recently published and publicly available chest X-ray foundation model, we found reasons for concern: the model seems to encode protected characteristics, including biological sex and racial identity, which may lead to disparate performance across subgroups in downstream applications. While research into foundation models for healthcare applications is at an early stage, we believe it is important to make the community aware of these risks to avoid harm.
