RIFT2: Speeding-up RIFT with A New Rotation-Invariance Technique


Mar 01, 2023

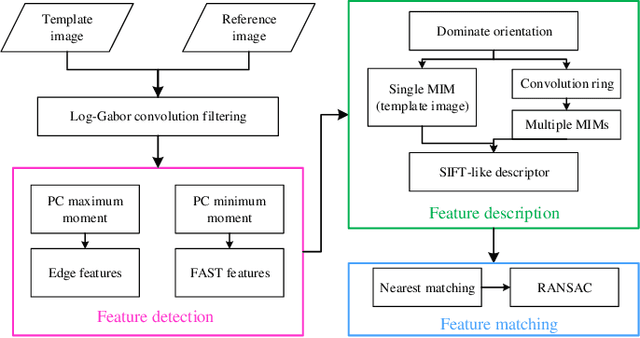

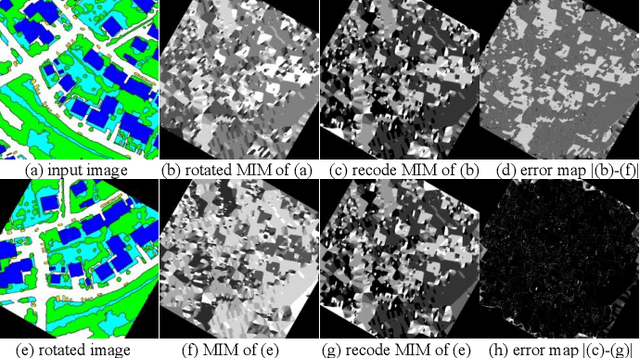

Multimodal image matching is an important prerequisite for multisource image information fusion. Multimodal feature matching is harder than traditional matching because of severe nonlinear radiation distortion (NRD). The radiation-variation insensitive feature transform (RIFT) (Li et al., 2019) is highly robust to NRD and has become a baseline method in multimodal feature matching. However, the high computational cost of its rotation-invariance step largely limits its practical use. In this paper, we propose an improved RIFT method, called RIFT2. We develop a new rotation-invariance technique based on a dominant index value, which avoids constructing the convolution sequence ring. In theory, this reduces both the running time and the memory consumption of the original RIFT by a factor of almost 3. Extensive experiments show that RIFT2 achieves matching performance similar to RIFT while being much faster and using less memory. The source code will be made publicly available at https://github.com/LJY-RS/RIFT2-multimodal-matching-rotation
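The idea of replacing the convolution sequence ring with a dominant index value can be illustrated with a small NumPy sketch. This is a hypothetical reading, not the authors' implementation. It assumes that the maximum index map (MIM) stores, at each pixel, the index of the orientation layer with the largest log-Gabor amplitude. It also assumes that the dominant index value is the most frequent index in a patch and that rotation normalization amounts to a cyclic re-indexing modulo the number of orientations. The helper names `maximum_index_map`, `dominant_index_value`, `reindex_mim`, and `mim_from_shifted_ring` are illustrative only. The sketch shows that, under these assumptions, re-indexing an existing MIM gives the same result as rebuilding it from a cyclically shifted convolution sequence.

```python
import numpy as np


def maximum_index_map(amplitudes: np.ndarray) -> np.ndarray:
    """Index (0..n_orient-1) of the strongest orientation layer at each pixel.

    Assumption: `amplitudes` has shape (n_orient, H, W), one amplitude layer
    per orientation, already summed over scales.
    """
    return np.argmax(amplitudes, axis=0)


def dominant_index_value(mim_patch: np.ndarray, n_orient: int) -> int:
    """Hypothetical helper: the most frequent index value in a MIM patch."""
    counts = np.bincount(mim_patch.ravel(), minlength=n_orient)
    return int(np.argmax(counts))


def reindex_mim(mim_patch: np.ndarray, dominant: int, n_orient: int) -> np.ndarray:
    """Cyclically shift index values so that `dominant` maps to 0.

    This works on the existing MIM, so no extra convolution layers are
    stored or rearranged.
    """
    return (mim_patch - dominant) % n_orient


def mim_from_shifted_ring(amplitudes: np.ndarray, start: int) -> np.ndarray:
    """RIFT-style alternative (as assumed here): rebuild the MIM after reading
    the convolution sequence cyclically, starting at layer `start`."""
    # np.roll with a negative shift gives shifted[k] = amplitudes[(k + start) % n]
    shifted = np.roll(amplitudes, -start, axis=0)
    return maximum_index_map(shifted)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    n_orient = 6
    amps = rng.random((n_orient, 32, 32))  # synthetic amplitudes, not real data

    mim = maximum_index_map(amps)
    d = dominant_index_value(mim, n_orient)
    fast = reindex_mim(mim, d, n_orient)
    slow = mim_from_shifted_ring(amps, d)
    print(np.array_equal(fast, slow))
```

The equivalence holds because taking the argmax over layers rotated by `start` yields the original argmax minus `start`, modulo `n_orient` (ties aside). Under these assumptions, only one MIM has to be built and stored per image instead of one per possible starting layer.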
