RiddleSense: Answering Riddle Questions as Commonsense Reasoning

Jan 02, 2021

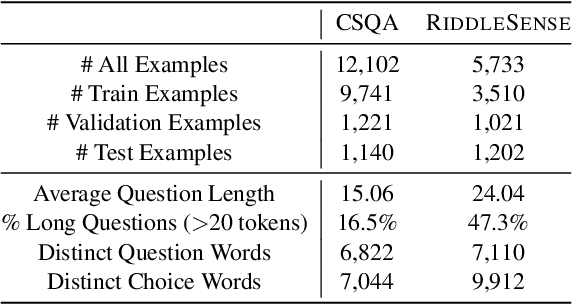

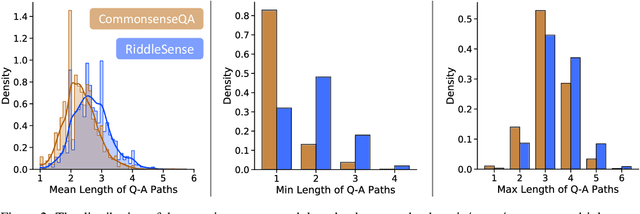

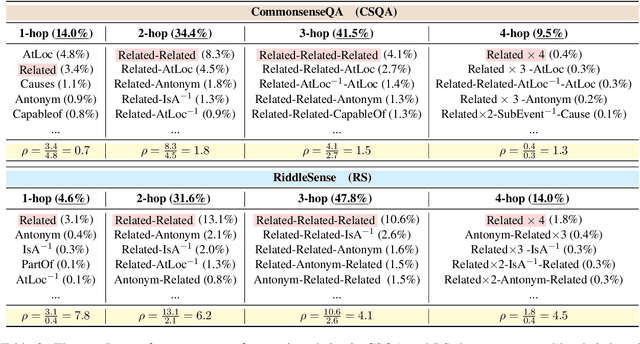

A riddle is a mystifying, puzzling question about everyday concepts. For example, the riddle "I have five fingers but I am not alive. What am I?" asks about the concept of a glove. Solving riddles is a challenging cognitive process for humans because it requires complex commonsense reasoning abilities and an understanding of figurative language. However, there are currently no commonsense reasoning datasets that test these abilities. We propose RiddleSense, a novel multiple-choice question answering challenge for benchmarking higher-order commonsense reasoning models. It is the first large dataset for riddle-style commonsense question answering, with distractors crowdsourced from human annotators. We systematically evaluate a wide range of reasoning models on it and find a large gap between the best supervised model and human performance, which points to interesting future research on higher-order commonsense reasoning and computational creativity.
