Regularizing Neural Networks via Stochastic Branch Layers

Paper and Code

Oct 03, 2019

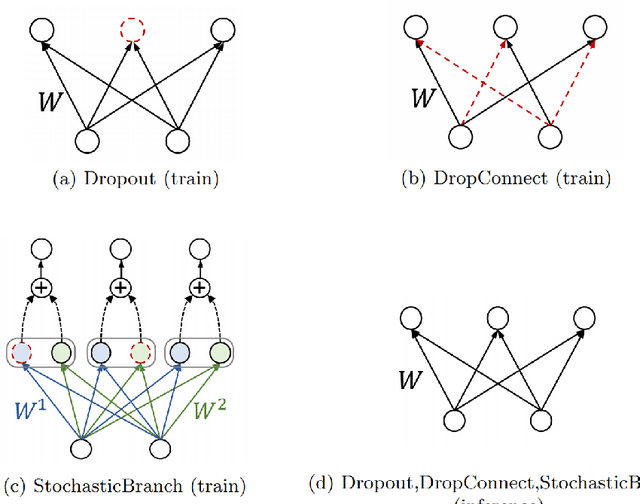

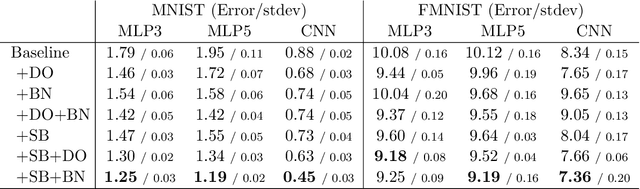

We introduce a novel stochastic regularization technique for deep neural networks, which decomposes a layer into multiple branches with different parameters and merges stochastically sampled combinations of the outputs from the branches during training. Since the factorized branches can collapse into a single branch through a linear operation, inference requires no additional complexity compared to the ordinary layers. The proposed regularization method, referred to as StochasticBranch, is applicable to any linear layers such as fully-connected or convolution layers. The proposed regularizer allows the model to explore diverse regions of the model parameter space via multiple combinations of branches to find better local minima. An extensive set of experiments shows that our method effectively regularizes networks and further improves the generalization performance when used together with other existing regularization techniques.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge