Recognizing Plans by Learning Embeddings from Observed Action Distributions

Dec 05, 2017

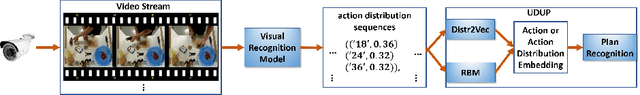

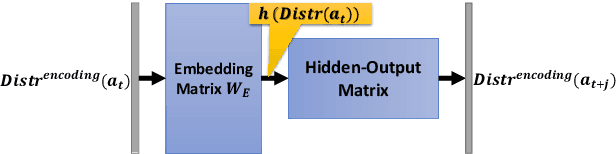

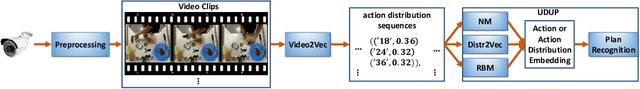

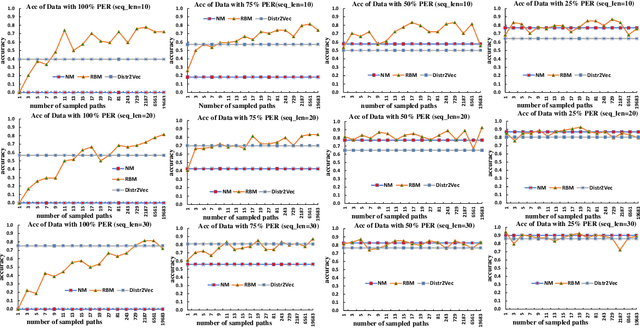

Recent advances in visual activity recognition have raised the possibility of applications such as automated video surveillance. Effective approaches to such problems, however, require the ability to recognize the plans of agents from video information. Although traditional plan recognition algorithms depend on access to sophisticated domain models, one promising recent direction involves learning shallow models directly from observed activity sequences and using them to recognize or predict plans. One limitation of such approaches is that they expect observed action sequences as training data. In many cases involving vision or sensing from raw data, there is considerable uncertainty about the specific action at any given time point. The most we can expect in such cases is probabilistic information about the action at that point, so the training data will be sequences of such observed action distributions. In this paper, we focus on effective plan recognition with such uncertain observations. Our contribution is a novel extension of word vector embedding techniques that directly handles such observation distributions as input. This involves computing embeddings by minimizing the distance between distributions, measured as KL-divergence. We show that our approach has superior performance when the perception error rate (PER) is higher, and competitive performance when the PER is lower. We also explore the possibility of using importance sampling techniques to handle observed action distributions with traditional word vector embeddings. We show that although such approaches can give good recognition accuracy, they take significantly longer to train, and their performance degrades significantly at higher perception error rates.
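To make the two ingredients named in the abstract concrete, the following is a minimal Python sketch, not the paper's implementation. It assumes each observation is a discrete distribution over a small action vocabulary. The function names `kl_divergence` and `sample_sequences`, the three-action vocabulary, and the uniform "predicted" distribution are all hypothetical and chosen for illustration. The first function computes the KL-divergence that a distribution-aware embedding objective would minimize. The second draws concrete action sequences by plain per-step sampling, a simpler stand-in for the importance sampling route mentioned above, so that a traditional word vector embedding could be trained on them.

```python
import math
import random


def kl_divergence(p, q, eps=1e-12):
    """KL(p || q) for two discrete distributions over the same actions.

    Terms with p_i == 0 contribute nothing; q_i is floored at eps
    (an assumption of this sketch) to avoid division by zero.
    """
    return sum(pi * math.log(pi / max(qi, eps)) for pi, qi in zip(p, q) if pi > 0)


def sample_sequences(observed, num_samples, rng):
    """Draw concrete action-index sequences from per-step distributions.

    Plain per-step sampling, used here as a simplified stand-in for
    importance sampling.
    """
    actions = list(range(len(observed[0])))
    return [
        [rng.choices(actions, weights=dist)[0] for dist in observed]
        for _ in range(num_samples)
    ]


# Hypothetical data: two time steps, three possible actions per step.
observed = [
    [0.8, 0.1, 0.1],  # perception is fairly sure action 0 occurred
    [0.2, 0.7, 0.1],  # perception favors action 1
]
predicted = [1 / 3, 1 / 3, 1 / 3]  # stand-in for a model's predicted distribution

# Per-step loss that a distribution-based objective would drive down.
loss = sum(kl_divergence(step, predicted) for step in observed)

# Sampled sequences that a standard embedding model could consume.
samples = sample_sequences(observed, num_samples=5, rng=random.Random(0))
```

The sampling route needs many sequences per observation to approximate the original distributions, which is consistent with the longer training time the abstract reports for sampling-based approaches.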
