Receding Horizon Control Based Online Motion Planning with Partially Infeasible LTL Specifications


Jul 23, 2020

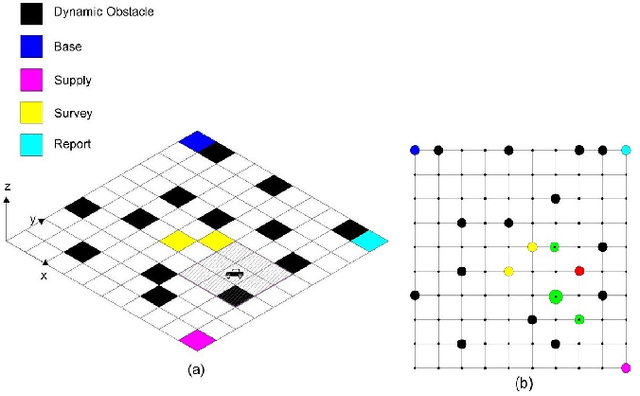

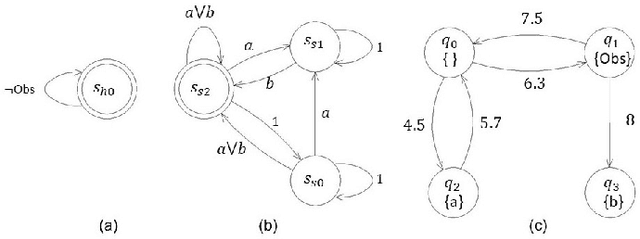

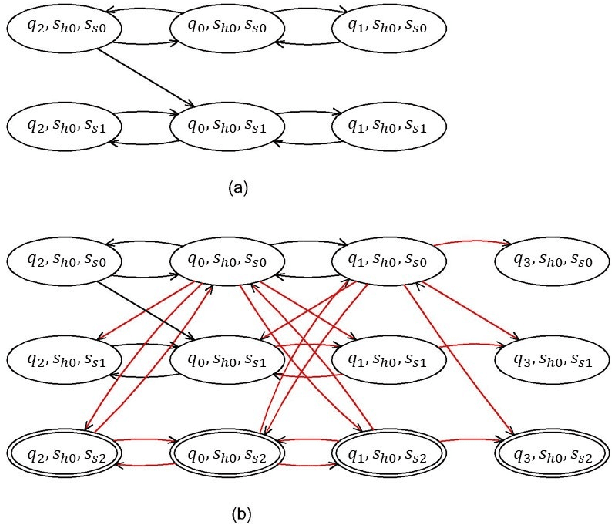

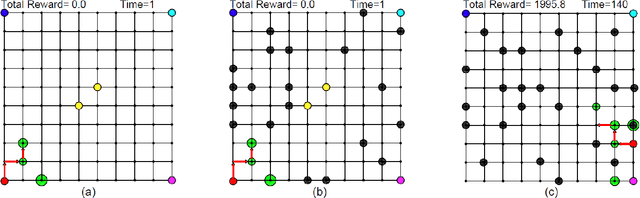

This work considers online optimal motion planning of an autonomous agent subject to linear temporal logic (LTL) constraints. The environment is dynamic in the sense that it contains mobile obstacles and time-varying areas of interest (i.e., time-varying rewards and workspace properties) to be visited by the agent. Since user-specified tasks may not be fully realizable (i.e., they may be partially infeasible), this work considers hard and soft LTL constraints, where hard constraints enforce safety requirements (e.g., avoiding obstacles) while soft constraints represent tasks that can be relaxed so that the agent need not strictly follow the user specifications. The motion planning problem is to generate policies that, in decreasing order of priority, 1) formally guarantee the satisfaction of safety constraints; 2) mostly satisfy soft constraints (i.e., minimize the violation cost if the desired tasks are partially infeasible); and 3) optimize the reward-collection objective (i.e., visiting dynamic areas of greater interest). To achieve these objectives, a relaxed product automaton is constructed, which allows the agent to deviate from the desired LTL constraints. A utility function is developed to quantify the difference between the revised and the desired motion plan, and the accumulated rewards are designed to bias the motion plan towards areas of greater interest. Receding horizon control is synthesized with an LTL formula to maximize the accumulated utilities over a finite horizon, while ensuring that safety constraints are fully satisfied and soft constraints are mostly satisfied. Simulation and experimental results are provided to demonstrate the effectiveness of the developed motion strategy.
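The priority ordering described above can be illustrated with a small sketch. This is not the paper's algorithm: it builds no relaxed product automaton and parses no LTL formula. It assumes a hypothetical grid world in which hard constraints are a set of forbidden cells, the violation cost is a per-cell penalty, and rewards are per-cell values collected on first visit. The function names (`plan_horizon`, `receding_horizon`), the discount factor `gamma`, and the lexicographic score are all assumptions made for illustration.

```python
# Illustrative sketch only: a grid-world stand-in for the priorities
# (safety > low violation > high reward). All names are hypothetical.
MOVES = [(0, 0), (1, 0), (-1, 0), (0, 1), (0, -1)]  # stay, right, left, up, down


def plan_horizon(start, horizon, size, unsafe, violation, reward, gamma=0.9):
    """Exhaustively search all safe paths of `horizon` steps from `start`.

    Returns (score, path) or None if no safe path exists. The score is the
    tuple (-total_violation, discounted_reward), compared lexicographically,
    so violation is minimized first and reward is maximized second.
    """
    best = None

    def extend(path, viol, rew):
        nonlocal best
        t = len(path)  # time index of the next cell to be appended
        if t - 1 == horizon:
            score = (-viol, rew)
            if best is None or score > best[0]:
                best = (score, list(path))
            return
        x, y = path[-1]
        for dx, dy in MOVES:
            nxt = (x + dx, y + dy)
            if not (0 <= nxt[0] < size and 0 <= nxt[1] < size):
                continue
            if nxt in unsafe:  # hard constraint: never enter unsafe cells
                continue
            # Reward counts only on the first visit within this plan; the
            # discount (an assumption) makes earlier collection preferable.
            gain = 0.0 if nxt in path else gamma ** t * reward.get(nxt, 0.0)
            path.append(nxt)
            extend(path, viol + violation.get(nxt, 0), rew + gain)
            path.pop()

    extend([start], 0, 0.0)
    return best


def receding_horizon(start, steps, horizon, size, unsafe, violation, reward):
    """Replan at every step and execute only the first move of each plan."""
    pos, trace = start, [start]
    remaining = dict(reward)  # copy so collected rewards can be removed
    for _ in range(steps):
        result = plan_horizon(pos, horizon, size, unsafe, violation, remaining)
        if result is None:  # no safe move exists from the current cell
            break
        pos = result[1][1]
        remaining.pop(pos, None)
        trace.append(pos)
    return trace


if __name__ == "__main__":
    trace = receding_horizon(
        start=(0, 0), steps=4, horizon=4, size=3,
        unsafe={(1, 0)}, violation={(1, 1): 1}, reward={(2, 2): 5.0},
    )
    print(trace)
```

In this toy setup the agent never enters the forbidden cell, routes around the penalized cell when a penalty-free route exists, and only then prefers paths that collect reward sooner. In a dynamic environment, `unsafe` and `reward` would be updated between calls to `plan_horizon`, which is the reason for replanning at every step.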
