Ray-based framework for state identification in quantum dot devices


Feb 23, 2021

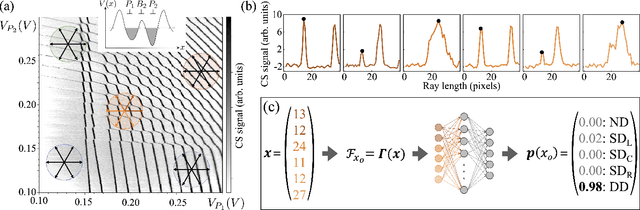

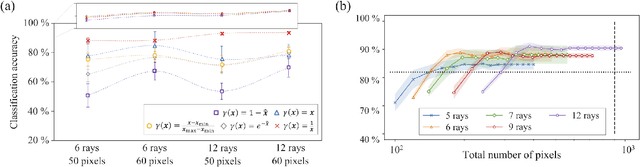

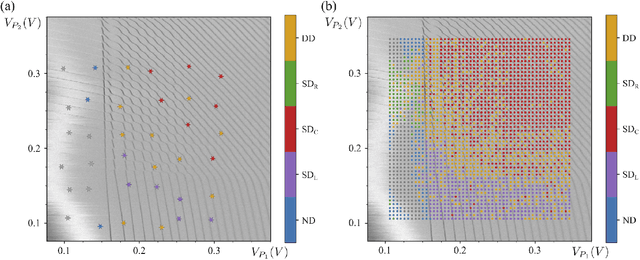

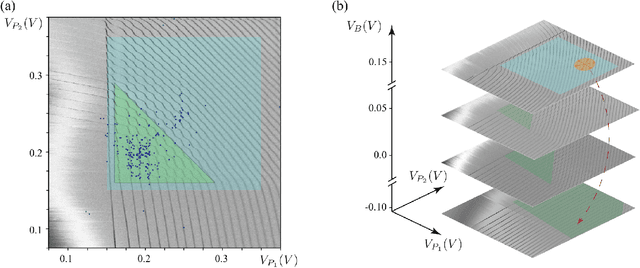

Quantum dots (QDs) defined with electrostatic gates are a leading platform for scalable quantum computing. However, as the number of qubits increases, so does the complexity of the control parameter space. Traditional measurement techniques, which rely on complete or near-complete exploration of the device response via two-parameter scans (images), quickly become impractical as the number of gates grows. Here, we propose to circumvent this challenge with a measurement technique that relies on one-dimensional projections of the device response in the multidimensional parameter space. We use this machine learning (ML) approach, dubbed the ray-based classification (RBC) framework, to implement a classifier for QD states, enabling automated recognition of qubit-relevant parameter regimes. We show that RBC surpasses the 82% accuracy benchmark set by the experimental implementation of image-based classification techniques in prior work, while reducing the number of measurement points needed by up to 70%. This reduction in measurement cost is a significant gain for time-intensive QD measurements and a step toward scalable devices. We also discuss how the RBC-based optimizer, which tunes the device to a multi-qubit regime, performs in the two- and three-dimensional parameter spaces defined by the plunger and barrier gates that control the dots. This work provides experimental validation of both efficient state identification and optimization with ML techniques for non-traditional measurements in quantum systems with high-dimensional parameter spaces and time-intensive measurements.
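The abstract does not say how a set of one-dimensional ray measurements becomes classifier input. The sketch below is a minimal illustration under stated assumptions, not the authors' implementation: rays emanate from a single point in a two-gate voltage plane, each ray is sampled at evenly spaced points, and the feature recorded per ray is the distance to the first detected jump in the sensor response (a transition). The resulting vector of distances, called a "fingerprint" here, is what a trained classifier would consume; that classification step is omitted. The functions `ray_directions_2d`, `toy_sensor`, and `ray_fingerprint` and all parameter values are hypothetical, and `toy_sensor` uses axis-aligned transition lines rather than a realistic charge-stability diagram.

```python
import numpy as np


def ray_directions_2d(num_rays: int) -> np.ndarray:
    """Return `num_rays` unit vectors evenly spaced in angle in a 2D gate plane."""
    angles = 2.0 * np.pi * np.arange(num_rays) / num_rays
    return np.stack([np.cos(angles), np.sin(angles)], axis=1)


def toy_sensor(v: np.ndarray) -> float:
    """Hypothetical stand-in for a charge-sensor reading.

    The response steps by one whenever either gate voltage crosses an integer,
    so the "transition lines" are the axis-aligned lines v0 = k and v1 = k.
    A real device has tilted, honeycomb-like transition lines instead.
    """
    return float(np.floor(v[0]) + np.floor(v[1]))


def ray_fingerprint(origin, directions, sensor, max_length=2.0, num_points=41,
                    jump_threshold=0.5):
    """For each ray, return the distance to the first detected transition.

    Points are sampled at `num_points` evenly spaced distances in
    [0, max_length]; a transition is flagged where consecutive readings differ
    by more than `jump_threshold`. Rays with no transition get `max_length`.
    """
    origin = np.asarray(origin, dtype=float)
    distances = np.linspace(0.0, max_length, num_points)
    fingerprint = np.full(len(directions), max_length)
    for i, direction in enumerate(directions):
        # One 1D measurement: sample the sensor along this ray.
        readings = np.array([sensor(origin + t * direction) for t in distances])
        jumps = np.flatnonzero(np.abs(np.diff(readings)) > jump_threshold)
        if jumps.size:
            # First sampled point past the transition (resolution = one step).
            fingerprint[i] = distances[jumps[0] + 1]
    return fingerprint


if __name__ == "__main__":
    dirs = ray_directions_2d(4)  # directions +v0, +v1, -v0, -v1
    fp = ray_fingerprint([0.52, 0.37], dirs, toy_sensor)
    print("fingerprint:", fp)
    # Measurement budget: rays x points per ray vs. a hypothetical 60 x 60 scan.
    ray_points = len(dirs) * 41
    image_points = 60 * 60
    print(f"points measured: rays={ray_points}, image={image_points}")
```

With the origin at (0.52, 0.37), the four rays first cross a transition near distances 0.48, 0.63, 0.52, and 0.37, so the sampled fingerprint is approximately [0.5, 0.65, 0.55, 0.4]. The cost of this sketch scales with the number of rays times the points per ray rather than with the full area of a two-gate image, which is the kind of saving the abstract describes; the specific numbers here are illustrative only.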
