RAUM-VO: Rotational Adjusted Unsupervised Monocular Visual Odometry

Paper and Code

Mar 14, 2022

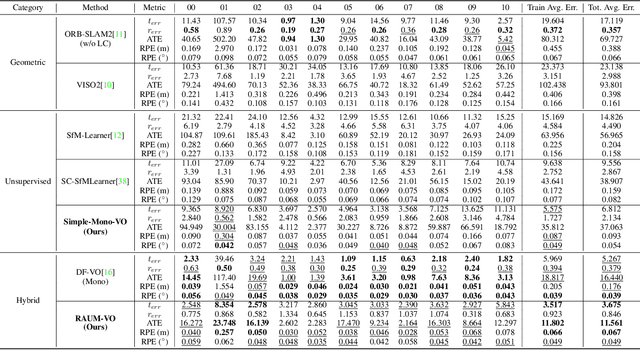

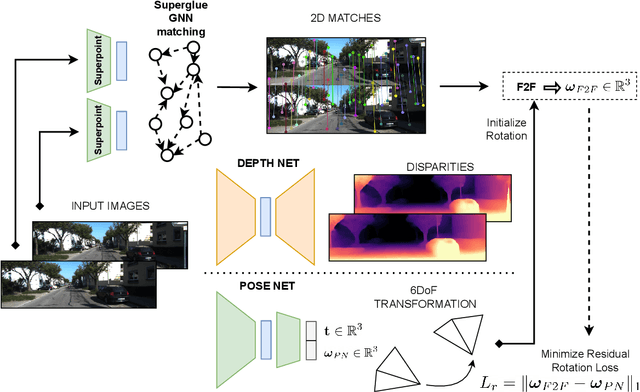

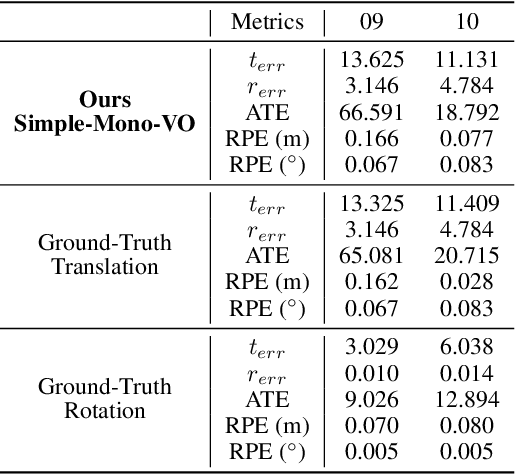

Unsupervised learning for monocular camera motion and 3D scene understanding has gained popularity over traditional methods, relying on epipolar geometry or non-linear optimization. Notably, deep learning can overcome many issues of monocular vision, such as perceptual aliasing, low-textured areas, scale-drift, and degenerate motions. Also, concerning supervised learning, we can fully leverage video streams data without the need for depth or motion labels. However, in this work, we note that rotational motion can limit the accuracy of the unsupervised pose networks more than the translational component. Therefore, we present RAUM-VO, an approach based on a model-free epipolar constraint for frame-to-frame motion estimation (F2F) to adjust the rotation during training and online inference. To this end, we match 2D keypoints between consecutive frames using pre-trained deep networks, Superpoint and Superglue, while training a network for depth and pose estimation using an unsupervised training protocol. Then, we adjust the predicted rotation with the motion estimated by F2F using the 2D matches and initializing the solver with the pose network prediction. Ultimately, RAUM-VO shows a considerable accuracy improvement compared to other unsupervised pose networks on the KITTI dataset while reducing the complexity of other hybrid or traditional approaches and achieving comparable state-of-the-art results.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge