Prototypical Classifier for Robust Class-Imbalanced Learning


Oct 22, 2021

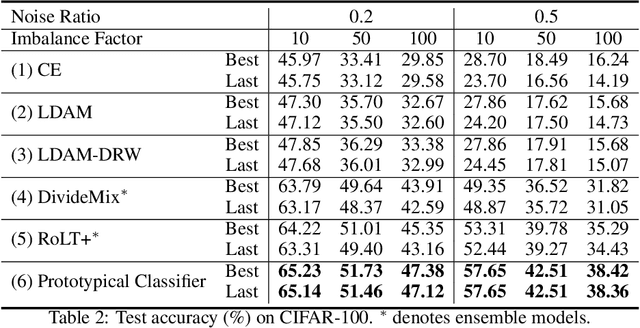

Deep neural networks have been shown to be very powerful methods for many supervised learning tasks. However, they can also easily overfit to training set biases, i.e., label noise and class imbalance. While both learning with noisy labels and class-imbalanced learning have received tremendous attention, existing works mainly focus on one of these two training set biases. To fill the gap, we propose Prototypical Classifier, which does not require fitting additional parameters given the embedding network. Unlike conventional classifiers that are biased towards head classes, Prototypical Classifier produces balanced and comparable predictions for all classes even when the training set is class-imbalanced. By leveraging this appealing property, we can easily detect noisy labels by thresholding the confidence scores predicted by Prototypical Classifier, where the threshold is dynamically adjusted over the training iterations. A sample reweighting strategy is then applied to mitigate the influence of noisy labels. We test our method on the CIFAR-10-LT, CIFAR-100-LT and WebVision datasets, observing that Prototypical Classifier obtains substantial improvements compared with state-of-the-art methods.
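The abstract does not spell out the formulas, so the following is only a minimal sketch of the general idea under stated assumptions: class prototypes are taken as the normalized mean embedding of each class, predictions are a softmax over cosine similarities, and a sample is down-weighted when the confidence for its given label falls below a threshold. The function names, the temperature of 0.1, the fixed threshold of 0.5 (the paper adjusts the threshold dynamically) and the hard 0/1 weighting are all illustrative assumptions, not the authors' implementation.

```python
import numpy as np

def l2_normalize(x):
    # Scale each row to unit length so dot products become cosine similarities.
    return x / np.linalg.norm(x, axis=1, keepdims=True)

def class_prototypes(embeddings, labels, num_classes):
    # Assumption: a prototype is the normalized mean of the normalized
    # embeddings carrying that label. No parameters are fitted.
    z = l2_normalize(embeddings)
    protos = np.stack([z[labels == c].mean(axis=0) for c in range(num_classes)])
    return l2_normalize(protos)

def prototype_probs(embeddings, protos, temperature=0.1):
    # Softmax over cosine similarity to each prototype (temperature is assumed).
    logits = l2_normalize(embeddings) @ protos.T / temperature
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    p = np.exp(logits)
    return p / p.sum(axis=1, keepdims=True)

def sample_weights(probs, labels, threshold):
    # Confidence assigned to each sample's *given* label; samples below the
    # threshold are treated as likely noisy. Hard 0/1 weights are an assumption.
    conf = probs[np.arange(len(labels)), labels]
    return np.where(conf >= threshold, 1.0, 0.0)

# Toy imbalanced data: six head-class points near (1, 0), three tail-class
# points near (0, 1), and one head-like point mislabeled as the tail class.
X = np.array([[1.0, 0.0], [1.0, 0.1], [1.0, -0.1], [0.9, 0.05],
              [1.0, 0.05], [1.0, -0.05],
              [0.0, 1.0], [0.1, 1.0], [0.05, 1.0],
              [1.0, 0.0]])
y = np.array([0, 0, 0, 0, 0, 0, 1, 1, 1, 1])

protos = class_prototypes(X, y, num_classes=2)
probs = prototype_probs(X, protos)
weights = sample_weights(probs, y, threshold=0.5)  # fixed placeholder threshold
print(weights)  # only the last (mislabeled) sample receives weight 0
```

Because each class contributes exactly one unit-length prototype regardless of how many samples it has, the scores in this sketch are not scaled by class frequency, which is the property the abstract relies on for comparing confidences across head and tail classes.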
