Programmable Neural Network Trojan for Pre-Trained Feature Extractor

Paper and Code

Jan 23, 2019

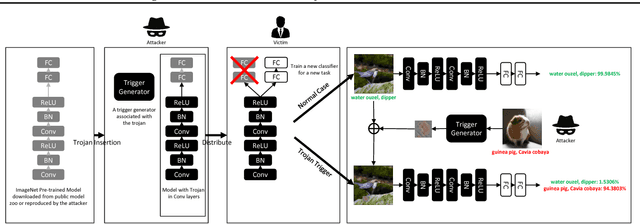

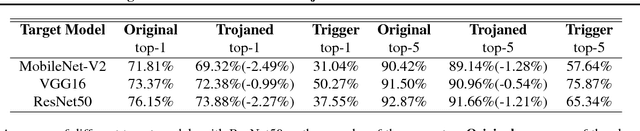

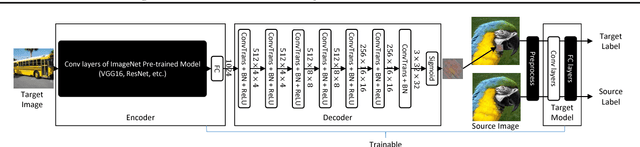

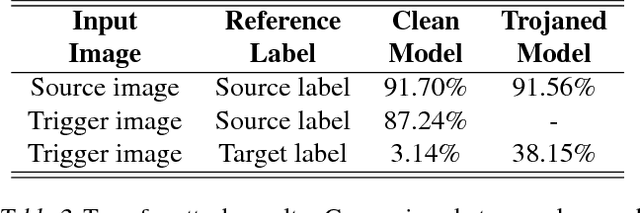

Neural network (NN) trojaning attack is an emerging and important attack model that can broadly damage the system deployed with NN models. Existing studies have explored the outsourced training attack scenario and transfer learning attack scenario in some small datasets for specific domains, with limited numbers of fixed target classes. In this paper, we propose a more powerful trojaning attack method for both outsourced training attack and transfer learning attack, which outperforms existing studies in the capability, generality, and stealthiness. First, The attack is programmable that the malicious misclassification target is not fixed and can be generated on demand even after the victim's deployment. Second, our trojan attack is not limited in a small domain; one trojaned model on a large-scale dataset can affect applications of different domains that reuse its general features. Thirdly, our trojan design is hard to be detected or eliminated even if the victims fine-tune the whole model.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge