Privacy-Preserving Collaborative Deep Learning with Irregular Participants


Dec 25, 2018

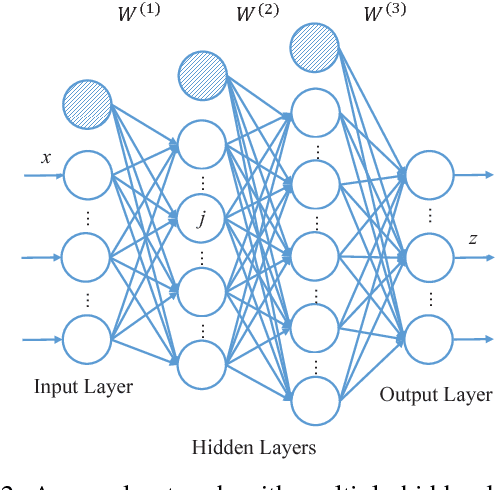

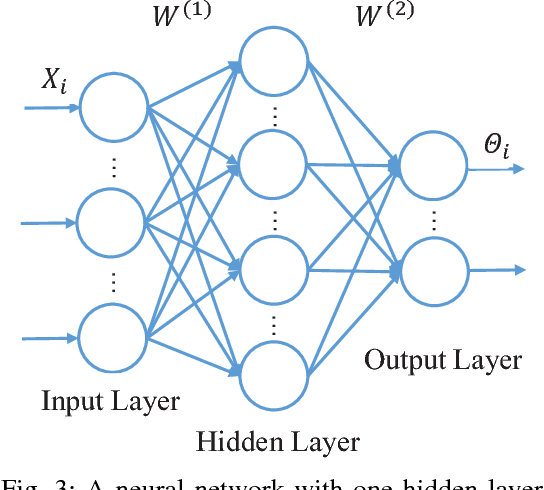

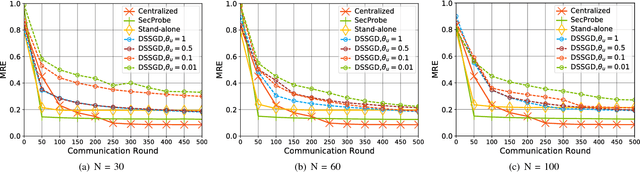

As large amounts of data collected from sensors, mobile users, and institutions become widely available, neural-network-based deep learning is growing increasingly popular and achieving great success in many application scenarios, such as image detection, speech recognition, and machine translation. While deep learning provides various benefits, the training data usually contains highly sensitive information, e.g., personal medical records, and storing the data in a central location may pose a considerable threat to user privacy. In this paper, we present a practical privacy-preserving collaborative deep learning system that allows users (i.e., participants) to cooperatively build a collective deep learning model from the data of all participants, without direct data sharing or central data storage. In our system, each participant trains a local model on their own data and shares only model parameters with the others. To further avoid potential privacy leakage from the shared model parameters, we use the functional mechanism to perturb the objective function of the neural network during training, achieving $\epsilon$-differential privacy. In particular, for the first time, we consider the possibility that certain participants (called *irregular participants*) may hold low-quality data, and we propose a solution that reduces the impact of these participants while protecting their privacy. We evaluate the performance of our system on two well-known real-world data sets for regression and classification tasks. The results demonstrate that our system is robust to irregular participants and achieves accuracy close to that of the centralized model while ensuring rigorous privacy protection.
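To make the idea of perturbing the objective function concrete, here is a minimal sketch of the functional mechanism applied to plain linear regression rather than to the neural network used in the paper. It is an illustration under stated assumptions, not the authors' implementation: every feature and target is assumed to lie in $[-1, 1]$, the function names `perturbed_coefficients` and `minimize_perturbed` are hypothetical, and the eigenvalue clipping used to keep the noisy objective convex is a simplification chosen for this example.

```python
import numpy as np


def perturbed_coefficients(X, y, epsilon, rng):
    """Return noisy coefficients of the squared-error objective.

    The objective sum_i (y_i - x_i^T w)^2 expands into the polynomial
    sum(y^2) - 2 (X^T y)^T w + w^T (X^T X) w. The functional mechanism
    adds Laplace noise to these coefficients instead of to the result.

    Assumption: every entry of X and y lies in [-1, 1], which bounds the
    sensitivity of the coefficient vector by 2 * (1 + 2d + d^2).
    """
    d = X.shape[1]
    sensitivity = 2.0 * (1 + 2 * d + d * d)
    scale = sensitivity / epsilon
    # The constant term sum(y^2) does not affect the minimizer, so it is
    # skipped here; the privacy budget still accounts for it.
    linear = -2.0 * (X.T @ y) + rng.laplace(0.0, scale, size=d)
    quad = X.T @ X + rng.laplace(0.0, scale, size=(d, d))
    # Symmetrizing is post-processing and does not weaken the guarantee.
    quad = (quad + quad.T) / 2.0
    return linear, quad


def minimize_perturbed(linear, quad, floor=1e-3):
    """Minimize linear^T w + w^T quad w after forcing quad to be positive definite.

    Noise can make the quadratic term indefinite, so eigenvalues below
    `floor` are clipped (a simplification assumed for this sketch).
    """
    eigvals, eigvecs = np.linalg.eigh(quad)
    eigvals = np.maximum(eigvals, floor)
    quad_pd = (eigvecs * eigvals) @ eigvecs.T
    # Setting the gradient linear + 2 * quad_pd @ w to zero gives w.
    return np.linalg.solve(2.0 * quad_pd, -linear)
```

In a collaborative setting such as the one described above, each participant would run a step like this on their own data and share only the resulting weights, so the raw records never leave the participant. A smaller `epsilon` gives stronger privacy at the cost of noisier weights.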
