Predictive Edge Caching through Deep Mining of Sequential Patterns in User Content Retrievals

Oct 06, 2022

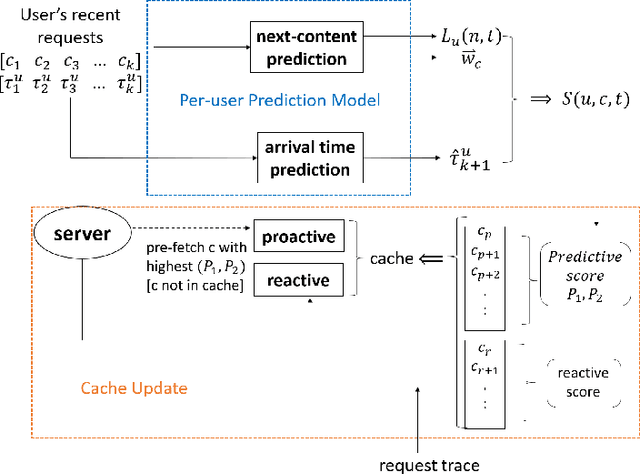

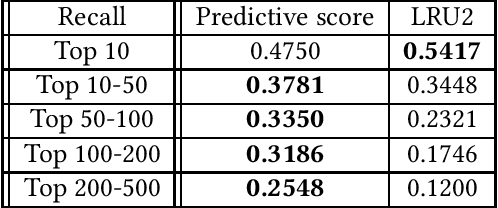

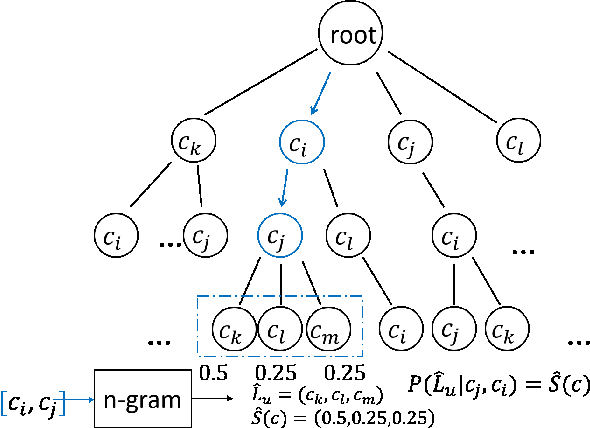

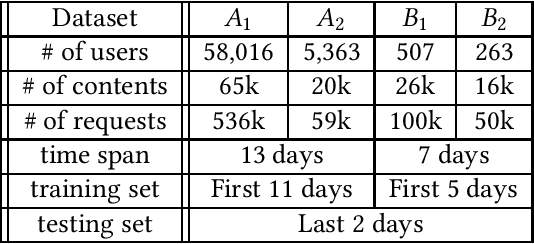

Edge caching plays an increasingly important role in boosting user content retrieval performance while reducing redundant network traffic. The effectiveness of caching ultimately hinges on how accurately content popularity in the near future can be predicted. However, at the network edge, content popularity can be extremely dynamic due to diverse user content retrieval behaviors and the low degree of user multiplexing. It is challenging for traditional reactive caching systems to keep up with such dynamic content popularity patterns. In this paper, we propose a novel Predictive Edge Caching (PEC) system that predicts future content popularity using fine-grained learning models that mine sequential patterns in user content retrieval behaviors, and opportunistically prefetches contents predicted to be popular in the near future using idle network bandwidth. Through extensive experiments driven by real content retrieval traces, we demonstrate that PEC can adapt to highly dynamic content popularity, significantly improving cache hit ratio and reducing user content retrieval latency over state-of-the-art caching policies. More broadly, our study demonstrates that edge caching performance can be boosted by deep mining of user content retrieval behaviors.
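
To make the idea of prediction-driven prefetching concrete, the following is a minimal sketch and not the PEC system itself. It swaps the paper's learning models for a simple first-order Markov predictor over per-user request sequences, and it prefetches on every request rather than only when bandwidth is idle. The class name `MarkovPrefetchCache` and all of its methods are hypothetical names introduced for illustration.

```python
from collections import Counter, OrderedDict, defaultdict


class MarkovPrefetchCache:
    """LRU cache with next-item prefetching (illustrative stand-in, not PEC)."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.cache = OrderedDict()               # item -> None, ordered by recency
        self.transitions = defaultdict(Counter)  # previous item -> counts of next items
        self.last_item = {}                      # user -> last item that user requested

    def _admit(self, item):
        # Insert or refresh an item, evicting least recently used entries on overflow.
        self.cache[item] = None
        self.cache.move_to_end(item)
        while len(self.cache) > self.capacity:
            self.cache.popitem(last=False)

    def request(self, user, item):
        """Serve one request and return True on a cache hit."""
        hit = item in self.cache
        self._admit(item)

        # Learn the sequential pattern: which item follows which, per user.
        prev = self.last_item.get(user)
        if prev is not None:
            self.transitions[prev][item] += 1
        self.last_item[user] = item

        # Prefetch the most frequent successor of the current item, if one is known.
        # Assumption: bandwidth is always available; a real system would check for idle capacity.
        followers = self.transitions[item]
        if followers:
            predicted, _count = followers.most_common(1)[0]
            self._admit(predicted)
        return hit
```

In this sketch, once one user has retrieved items in some order, a later user who starts the same sequence can find the following items already cached, which a purely reactive LRU cache of the same size would have to fetch on demand.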
