Point Cloud Compression with Implicit Neural Representations: A Unified Framework

Paper and Code

May 19, 2024

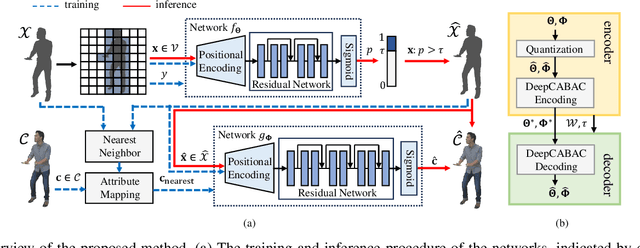

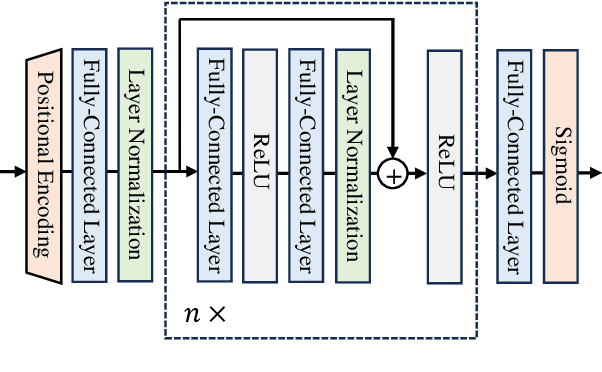

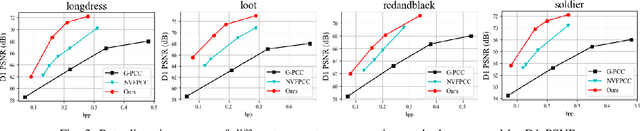

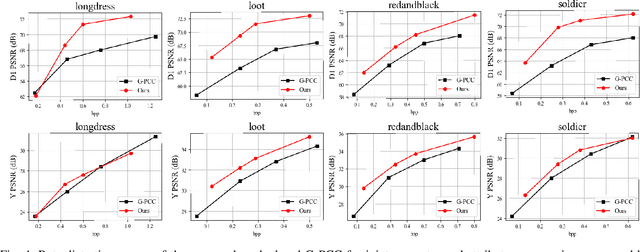

Point clouds have become increasingly vital across various applications thanks to their ability to realistically depict 3D objects and scenes. Nevertheless, effectively compressing unstructured, high-precision point cloud data remains a significant challenge. In this paper, we present a pioneering point cloud compression framework capable of handling both geometry and attribute components. Unlike traditional approaches and existing learning-based methods, our framework utilizes two coordinate-based neural networks to implicitly represent a voxelized point cloud. The first network generates the occupancy status of a voxel, while the second network determines the attributes of an occupied voxel. To tackle an immense number of voxels within the volumetric space, we partition the space into smaller cubes and focus solely on voxels within non-empty cubes. By feeding the coordinates of these voxels into the respective networks, we reconstruct the geometry and attribute components of the original point cloud. The neural network parameters are further quantized and compressed. Experimental results underscore the superior performance of our proposed method compared to the octree-based approach employed in the latest G-PCC standards. Moreover, our method exhibits high universality when contrasted with existing learning-based techniques.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge