OptiGAN: Generative Adversarial Networks for Goal Optimized Sequence Generation

Paper and Code

May 22, 2020

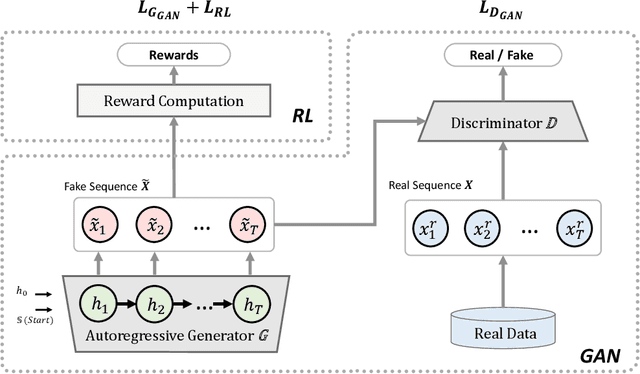

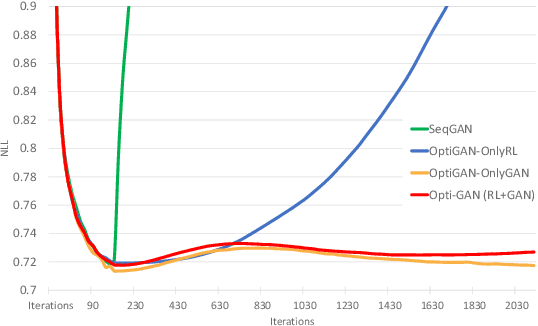

One of the challenging problems in sequence generation tasks is the optimized generation of sequences with specific desired goals. Current sequential generative models mainly generate sequences to closely mimic the training data, without direct optimization of desired goals or properties specific to the task. We introduce OptiGAN, a generative model that incorporates both Generative Adversarial Networks (GAN) and Reinforcement Learning (RL) to optimize desired goal scores using policy gradients. We apply our model to text and real-valued sequence generation, where our model is able to achieve higher desired scores out-performing GAN and RL baselines, while not sacrificing output sample diversity.

* Preprint for accepted conference paper at International Joint

Conference on Neural Networks (IJCNN) 2020

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge