On the Power of Abstention and Data-Driven Decision Making for Adversarial Robustness

Oct 13, 2020

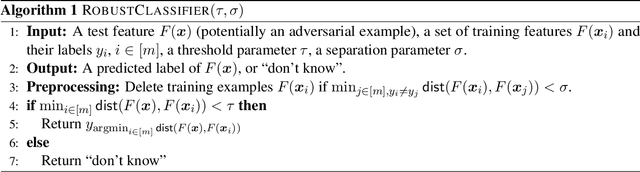

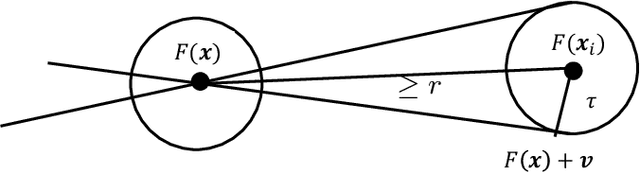

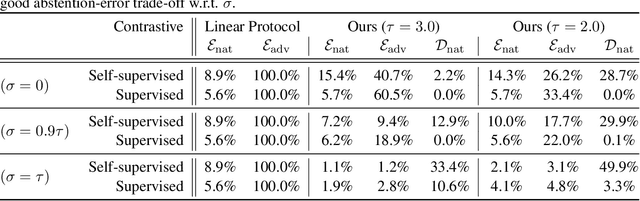

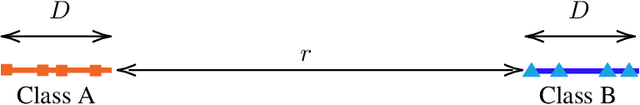

We prove that, against an adversary that can perturb datapoints by arbitrary amounts in random directions, classifiers that can abstain are more powerful than those that cannot. Specifically, we show that no matter how well-behaved the natural data is, any classifier that cannot abstain will be defeated by such an adversary. In contrast, when abstention is allowed, we give a parameterized algorithm that provably performs well against such an adversary when the classes are reasonably well-separated and the data dimension is high. We further use a data-driven method, with strong theoretical guarantees, to set the algorithm's parameters so as to optimize the trade-off between accuracy and abstention. Our theory applies directly to contrastive learning, where we empirically show that our algorithms achieve high robust accuracy with only a small amount of abstention in both supervised and self-supervised settings.
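The abstract does not spell out the algorithm, so what follows is only a hypothetical illustration of the general idea, not the paper's method. It shows a nearest-neighbor rule in an embedding space that abstains when a query lies farther than a threshold `tau` from every training point, an adversary that shifts a point by a fixed radius along a uniformly random direction, and a plain grid search over `tau` that minimizes error plus a per-abstention cost on held-out data (a simple stand-in for the paper's data-driven parameter tuning). The helper names, the toy Gaussian data, the dimension, the noise level, the attack radius and the abstention cost are all assumptions chosen for illustration.

```python
import math
import random
from typing import List, Optional, Sequence, Tuple

# Hypothetical sketch: names, data and parameters are illustrative assumptions,
# not the algorithm or settings from the paper.
Vec = Tuple[float, ...]
Labeled = List[Tuple[Vec, int]]


def dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def predict_or_abstain(x: Vec, train: Labeled, tau: float) -> Optional[int]:
    """Label of the nearest training point if it lies within tau; None means abstain."""
    best_d, best_label = min((dist(x, p), y) for p, y in train)
    return best_label if best_d <= tau else None


def evaluate(train: Labeled, data: Labeled, tau: float) -> Tuple[float, float]:
    """(error rate, abstention rate) on data; abstentions are not counted as errors."""
    preds = [(predict_or_abstain(x, train, tau), y) for x, y in data]
    abstain = sum(p is None for p, _ in preds)
    error = sum(p is not None and p != y for p, y in preds)
    return error / len(data), abstain / len(data)


def tune_tau(train: Labeled, val: Labeled, candidates: Sequence[float], cost: float) -> float:
    """Grid search: pick the tau minimizing error + cost * abstention on held-out data."""
    def objective(tau: float) -> float:
        err, abst = evaluate(train, val, tau)
        return err + cost * abst
    return min(candidates, key=objective)


def random_direction_attack(x: Vec, radius: float, rng: random.Random) -> Vec:
    """Move x by exactly `radius` along a uniformly random unit direction."""
    g = [rng.gauss(0.0, 1.0) for _ in x]
    norm = math.sqrt(sum(v * v for v in g))
    return tuple(xi + radius * gi / norm for xi, gi in zip(x, g))


def sample(center: Vec, label: int, n: int, sigma: float, rng: random.Random) -> Labeled:
    """n isotropic Gaussian points around center (toy, well-separated classes)."""
    return [(tuple(c + rng.gauss(0.0, sigma) for c in center), label) for _ in range(n)]


if __name__ == "__main__":
    rng = random.Random(0)
    d = 50  # "high" dimension for this toy example
    c0 = tuple(0.0 for _ in range(d))
    c1 = tuple(10.0 if i == 0 else 0.0 for i in range(d))
    train = sample(c0, 0, 20, 0.1, rng) + sample(c1, 1, 20, 0.1, rng)
    val = sample(c0, 0, 20, 0.1, rng) + sample(c1, 1, 20, 0.1, rng)
    tau = tune_tau(train, val, [0.25, 0.5, 1.0, 2.0, 4.0], cost=0.5)
    attacked = [(random_direction_attack(x, 30.0, rng), y) for x, y in val]
    print("tau:", tau)
    print("clean (error, abstain):", evaluate(train, val, tau))
    print("attacked (error, abstain):", evaluate(train, attacked, tau))
    print("no abstention, attacked error:", evaluate(train, attacked, math.inf)[0])
```

Running it as a script prints the chosen `tau` together with the clean and attacked error and abstention rates. On this toy data, a shift of radius 30 moves every attacked point farther than `tau` from all training points, so the abstaining rule abstains rather than errs, whereas the same rule with `tau = math.inf` (no abstention) is forced to output a label for every attacked point.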