On the Design and Analysis of Multiple View Descriptors


Nov 23, 2013

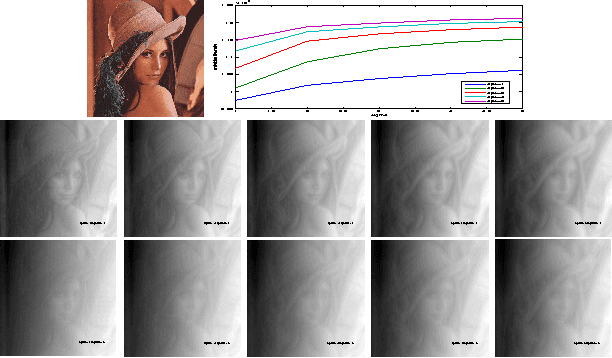

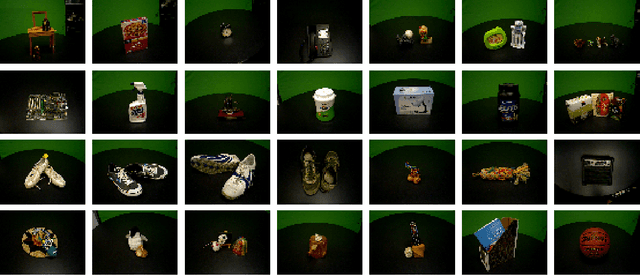

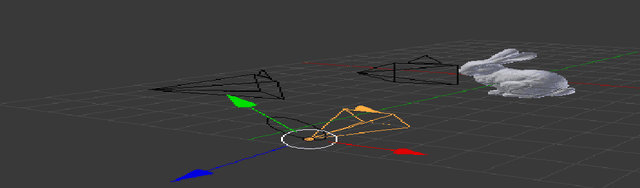

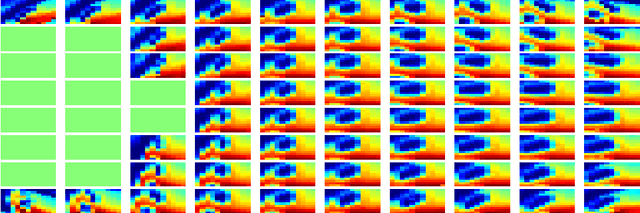

We propose an extension of popular descriptors based on gradient orientation histograms (HOG, computed in a single image) to multiple views. It hinges on interpreting HOG as a conditional density in the space of sampled images, where the effects of nuisance factors such as viewpoint and illumination are marginalized. In the single-view case, however, this marginalization is performed with respect to a very coarse approximation of the underlying distribution. Our extension leverages the fact that multiple views of the same scene allow intrinsic variability to be separated from nuisance variability, and thus afford better marginalization of the latter. The result is a descriptor that has the same complexity as single-view HOG and can be compared in the same manner, but that exploits multiple views to better trade off insensitivity to nuisance variability against specificity to intrinsic variability. We also introduce a novel multi-view wide-baseline matching dataset, consisting of a mixture of real and synthetic objects with ground-truth camera motion and dense three-dimensional geometry.
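To make the idea concrete, here is a minimal sketch, not the paper's actual construction. It computes a magnitude-weighted gradient orientation histogram for one grayscale patch, then pools several views into a descriptor of the same length by averaging the per-view histograms. The function names, the equal-weight averaging, and the assumption that the patches are already put into correspondence across views are all illustrative choices that the abstract does not specify.

```python
import math


def orientation_histogram(patch, n_bins=8):
    """Magnitude-weighted histogram of unsigned gradient orientations.

    `patch` is a 2D grayscale patch given as a list of equal-length rows.
    Gradients use central differences on interior pixels only.
    """
    height, width = len(patch), len(patch[0])
    hist = [0.0] * n_bins
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            gx = patch[y][x + 1] - patch[y][x - 1]
            gy = patch[y + 1][x] - patch[y - 1][x]
            mag = math.hypot(gx, gy)
            if mag == 0.0:
                continue  # flat region: no orientation to vote for
            theta = math.atan2(gy, gx) % math.pi  # fold into [0, pi)
            b = min(int(theta / math.pi * n_bins), n_bins - 1)
            hist[b] += mag
    total = sum(hist)
    # Normalize so the histogram can be read as a discrete density.
    return [v / total for v in hist] if total > 0 else hist


def multi_view_histogram(patches, n_bins=8):
    """Pool co-registered patches from several views (hypothetical helper).

    Assumption: equal-weight averaging of per-view histograms stands in
    for marginalizing nuisance variability over the available views.
    """
    hists = [orientation_histogram(p, n_bins) for p in patches]
    return [sum(h[b] for h in hists) / len(hists) for b in range(n_bins)]


# Two toy "views": a horizontal and a vertical intensity ramp.
view_a = [[float(x) for x in range(5)] for _ in range(5)]
view_b = [[float(y) for _ in range(5)] for y in range(5)]
descriptor = multi_view_histogram([view_a, view_b])
```

Because the pooled descriptor has the same number of bins as a single-view histogram, it can be compared with whatever distance is used for the single-view case, which mirrors the abstract's claim about equal complexity and comparability.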
