On the Anatomy of MCMC-based Maximum Likelihood Learning of Energy-Based Models


Apr 11, 2019

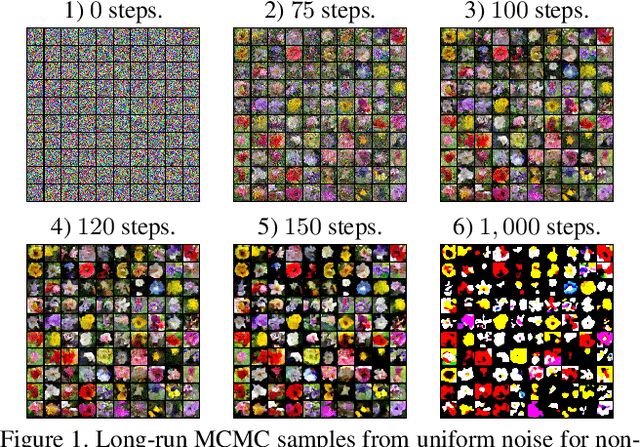

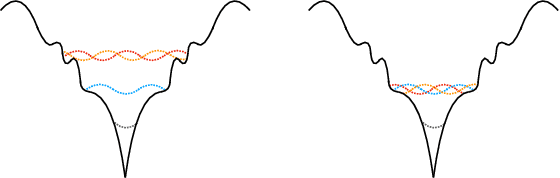

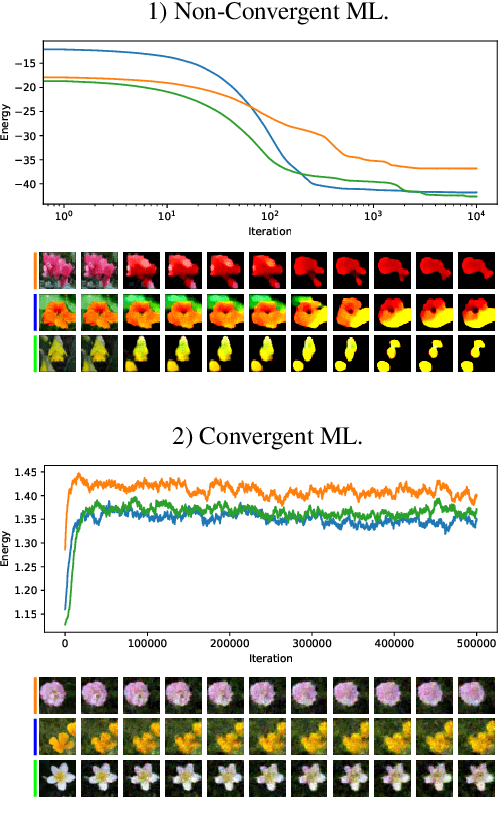

This study investigates the effects of Markov Chain Monte Carlo (MCMC) sampling in unsupervised Maximum Likelihood (ML) learning. Our attention is restricted to the family of unnormalized probability densities for which the negative log density (or energy function) is a ConvNet. In general, we find that many of the techniques used to stabilize training in previous studies can have the opposite effect. Stable ML learning with a ConvNet potential can be achieved with only a few hyper-parameters and no regularization. Using this minimal framework, we identify a variety of ML learning outcomes that depend on the implementation of MCMC sampling. On the one hand, we show that it is easy to train an energy-based model which can sample realistic images with short-run Langevin. ML can be effective and stable even when MCMC samples have much higher energy than true steady-state samples throughout training. Based on this insight, we introduce an ML method with purely noise-initialized MCMC, high-quality short-run synthesis, and the same budget as ML with informative MCMC initialization such as CD or PCD. Unlike previous models, our model can obtain realistic high-diversity samples from a noise signal after training with no auxiliary networks. On the other hand, ConvNet potentials learned with highly non-convergent MCMC do not have a valid steady state and cannot be considered approximate unnormalized densities of the training data, because long-run MCMC samples differ greatly from observed images. We show that it is much harder to train a ConvNet potential to learn a steady state over realistic images. To our knowledge, long-run MCMC samples of all previous models lose the realism of short-run samples. With correct tuning of Langevin noise, we train the first ConvNet potentials for which long-run and steady-state MCMC samples are realistic images.
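To make the idea of ML learning with noise-initialized short-run Langevin concrete, here is a minimal NumPy sketch. It is not the paper's implementation: the quadratic energy is a hypothetical stand-in for a ConvNet potential, the update `x - 0.5 * eps**2 * grad + eps * noise` is one common Langevin convention, and all function names and hyper-parameter values are assumptions chosen for illustration.

```python
import numpy as np

def grad_energy_x(x, theta):
    # Toy energy (assumption): U(x; theta) = 0.5 * ||x - theta||^2, so dU/dx = x - theta.
    return x - theta

def short_run_langevin(theta, n_chains, dim, rng, n_steps=100, eps=0.1):
    # Every chain starts from uniform noise (no data-based or persistent initialization).
    x = rng.uniform(-1.0, 1.0, size=(n_chains, dim))
    for _ in range(n_steps):
        noise = rng.standard_normal(x.shape)
        x = x - 0.5 * eps**2 * grad_energy_x(x, theta) + eps * noise
    return x

def ml_gradient(theta, data, samples):
    # Gradient w.r.t. theta of mean U(data) - mean U(samples).
    # For the toy energy, dU/dtheta = theta - x.
    return (theta - data).mean(axis=0) - (theta - samples).mean(axis=0)

rng = np.random.default_rng(0)
data = rng.normal(loc=[2.0, -1.0], scale=1.0, size=(1000, 2))  # synthetic "observed" data

theta = np.zeros(2)
for _ in range(200):
    samples = short_run_langevin(theta, n_chains=1000, dim=2, rng=rng)
    theta = theta - 0.5 * ml_gradient(theta, data, samples)  # plain gradient descent

samples = short_run_langevin(theta, n_chains=1000, dim=2, rng=rng)
print("data mean:            ", data.mean(axis=0))
print("short-run sample mean:", samples.mean(axis=0))
print("energy minimum theta: ", theta)
```

In this toy setting the short-run samples end up matching the data mean, while the learned energy minimum `theta` sits well away from it, since the chains stop long before reaching the steady state. This is only a loose analogue of the non-convergent regime described in the abstract, not a reproduction of its results.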
