On Exploration, Exploitation and Learning in Adaptive Importance Sampling

Paper and Code

Oct 31, 2018

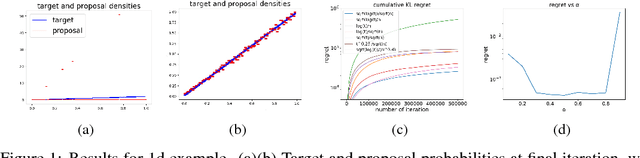

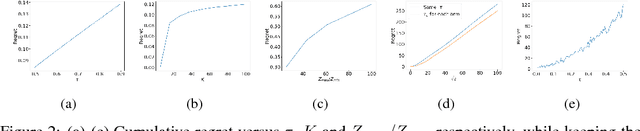

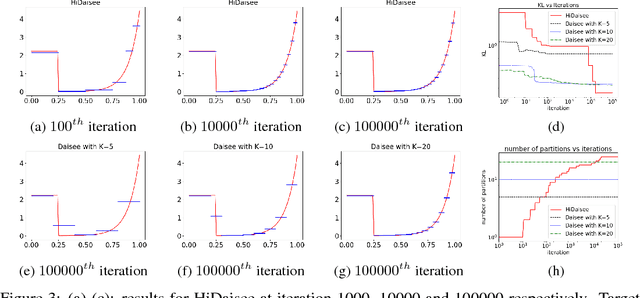

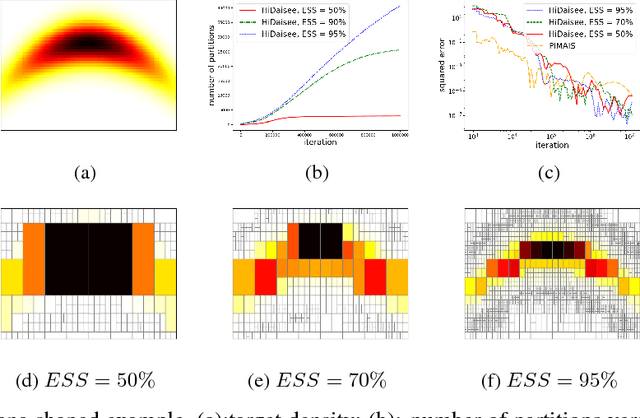

We study adaptive importance sampling (AIS) as an online learning problem and argue for the importance of the trade-off between exploration and exploitation in this adaptation. Borrowing ideas from the bandits literature, we propose Daisee, a partition-based AIS algorithm. We further introduce a notion of regret for AIS and show that Daisee has $\mathcal{O}(\sqrt{T}(\log T)^{\frac{3}{4}})$ cumulative pseudo-regret, where $T$ is the number of iterations. We then extend Daisee to adaptively learn a hierarchical partitioning of the sample space for more efficient sampling and confirm the performance of both algorithms empirically.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge