On Calibration and Out-of-domain Generalization


Feb 20, 2021

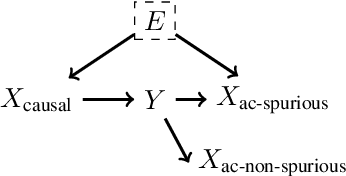

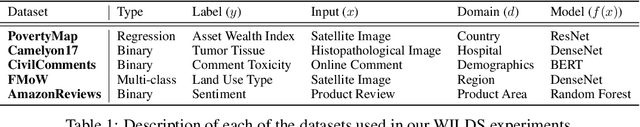

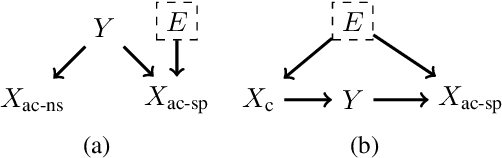

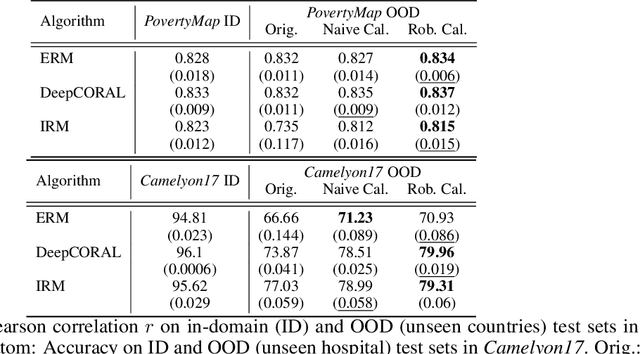

Out-of-domain (OOD) generalization is a significant challenge for machine learning models. To overcome it, many novel techniques have been proposed, often focused on learning models with certain invariance properties. In this work, we draw a link between OOD performance and model calibration, arguing that calibration across multiple domains can be viewed as a special case of an invariant representation leading to better OOD generalization. Specifically, we prove in a simplified setting that models that achieve multi-domain calibration are free of spurious correlations. This leads us to propose multi-domain calibration as a measurable surrogate for the OOD performance of a classifier. An important practical benefit of calibration is that there are many effective tools for calibrating classifiers. We show that these tools are easy to apply and adapt to a multi-domain setting. Using five datasets from the recently proposed WILDS OOD benchmark, we demonstrate that simply re-calibrating models across multiple domains in a validation set leads to significantly improved performance on unseen test domains. We believe this intriguing connection between calibration and OOD generalization is promising from a practical point of view and deserves further research from a theoretical point of view.
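To make the idea of multi-domain calibration as a measurable quantity more concrete, the following is a minimal sketch of how calibration error could be computed separately for each domain in a validation set. It is not the authors' code. The abstract does not say which calibration metric is used, so the standard binned expected calibration error (ECE) is assumed here, and the function names `expected_calibration_error` and `per_domain_ece` are hypothetical.

```python
import numpy as np


def expected_calibration_error(confidences, correct, n_bins=10):
    """Binned ECE: weighted mean of |accuracy - confidence| over equal-width bins.

    Assumption: ECE stands in for whatever calibration measure the paper uses.
    """
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    # Map each prediction to a bin index in [0, n_bins - 1];
    # a confidence of exactly 1.0 falls in the last bin.
    bin_ids = np.clip(np.digitize(confidences, edges[1:-1]), 0, n_bins - 1)
    ece = 0.0
    for b in range(n_bins):
        mask = bin_ids == b
        if mask.any():
            gap = abs(correct[mask].mean() - confidences[mask].mean())
            ece += mask.mean() * gap  # weight by the fraction of samples in the bin
    return float(ece)


def per_domain_ece(confidences, correct, domains, n_bins=10):
    """Return a dict mapping each domain label to the ECE of its samples."""
    confidences = np.asarray(confidences, dtype=float)
    correct = np.asarray(correct, dtype=float)
    domains = np.asarray(domains)
    result = {}
    for d in np.unique(domains):
        mask = domains == d
        result[d.item()] = expected_calibration_error(
            confidences[mask], correct[mask], n_bins
        )
    return result
```

Under this sketch, a model would count as calibrated across domains when every per-domain value is small, so a summary such as the maximum or mean over domains could be tracked on held-out validation domains. Which summary is appropriate is a design choice that the abstract leaves open.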
