Newton-type Methods for Minimax Optimization


Jun 25, 2020

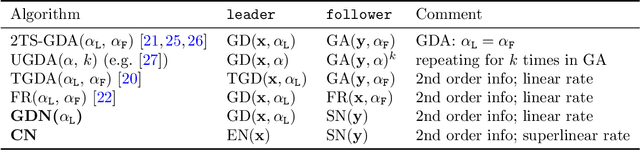

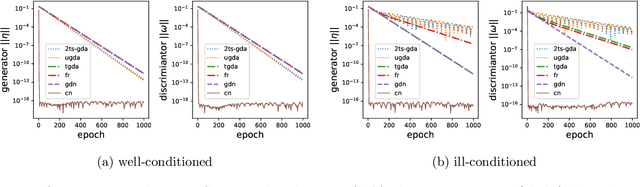

Differential games, in particular two-player sequential games (a.k.a. minimax optimization), have long been an important modelling tool in applied science and have received renewed interest in machine learning owing to many recent applications. To account for the sequential and nonconvex nature of these problems, new solution concepts and algorithms have been developed. In this work, we provide a detailed analysis of existing algorithms and relate them to two novel Newton-type algorithms. We argue that our Newton-type algorithms complement existing ones in that (a) they converge faster to (strict) local minimax points; (b) they are much more effective when the problem is ill-conditioned; and (c) their computational complexity remains similar. We verify our theoretical results with experiments on training GANs.
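To make the ill-conditioning claim concrete, here is a minimal sketch on a toy quadratic game. It is an illustration only and is not taken from the paper: the objective `f(x, y) = 0.5 x^T A x + x^T B y - 0.5 y^T C y`, the matrices `A`, `B`, `C`, the step sizes, and the function names `gda` and `gd_newton` are all assumptions chosen for the example. The follower's curvature `C` has condition number 1000, so plain gradient descent-ascent must use a small follower step, while a Newton step on the follower rescales by the inverse Hessian.

```python
import numpy as np

# Toy quadratic game (assumed for illustration, not from the paper):
#   f(x, y) = 0.5 x^T A x + x^T B y - 0.5 y^T C y
# The leader x minimizes, the follower y maximizes. C is ill-conditioned.
A = np.array([[1.0]])
B = np.array([[1.0, 1.0]])
C = np.diag([1.0, 1000.0])


def grad_x(x, y):
    # Partial derivative of f with respect to the leader variable x.
    return A @ x + B @ y


def grad_y(x, y):
    # Partial derivative of f with respect to the follower variable y.
    return B.T @ x - C @ y


def gda(x, y, lr_x, lr_y, steps):
    # Simultaneous gradient descent (on x) and ascent (on y).
    for _ in range(steps):
        gx, gy = grad_x(x, y), grad_y(x, y)
        x = x - lr_x * gx
        y = y + lr_y * gy
    return x, y


def gd_newton(x, y, lr_x, steps):
    # Gradient step for the leader, then a Newton step for the follower.
    # The Hessian of f in y is -C, which is constant for this quadratic.
    for _ in range(steps):
        x = x - lr_x * grad_x(x, y)
        y = y - np.linalg.solve(-C, grad_y(x, y))
    return x, y


x0 = np.array([1.0])
y0 = np.array([1.0, 1.0])

# lr_y must stay below 2 / 1000 for the ascent step to be stable.
x_gda, y_gda = gda(x0, y0, lr_x=0.1, lr_y=1e-3, steps=200)
x_new, y_new = gd_newton(x0, y0, lr_x=0.1, steps=200)

# The unique local minimax point of this game is the origin.
gda_dist = float(np.linalg.norm(np.concatenate([x_gda, y_gda])))
newton_dist = float(np.linalg.norm(np.concatenate([x_new, y_new])))
print("GDA distance to solution:      ", gda_dist)
print("GD-Newton distance to solution:", newton_dist)
```

With the same leader step size and iteration budget, the Newton follower update reaches the solution to high precision, while gradient descent-ascent is held back by the follower's weakly curved direction. On a quadratic the Newton step solves the follower's problem exactly, so this toy case overstates the gap relative to general nonconvex objectives.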
