Neural Sequence Model Training via $\alpha$-divergence Minimization


Jun 30, 2017

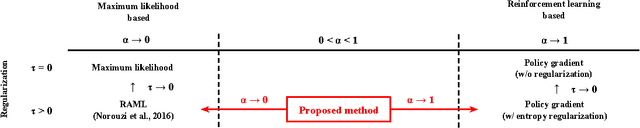

We propose a new training method for neural sequence models in which the objective function is defined by the $\alpha$-divergence. We demonstrate that this objective generalizes the maximum-likelihood (ML)-based and reinforcement learning (RL)-based objective functions, recovering them as special cases (ML corresponds to $\alpha \to 0$ and RL to $\alpha \to 1$). We also show that the gradient of the objective can be regarded as a mixture of the ML- and RL-based objective gradients. Experimental results on a machine translation task show that minimizing the objective with $\alpha > 0$ outperforms $\alpha \to 0$, which corresponds to ML-based methods.
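The two limiting cases can be checked numerically on a toy example. The sketch below assumes the Amari form of the $\alpha$-divergence, $D_\alpha(p \| q) = \frac{1 - \sum_y p(y)^\alpha q(y)^{1-\alpha}}{\alpha(1-\alpha)}$, which the abstract does not spell out, and uses two hypothetical three-outcome distributions (`model` and `target`) in place of real sequence distributions. Under that assumed convention, $\alpha \to 0$ approaches $\mathrm{KL}(\text{target} \| \text{model})$, the direction minimized by likelihood-style training, and $\alpha \to 1$ approaches $\mathrm{KL}(\text{model} \| \text{target})$.

```python
import math


def alpha_divergence(p, q, alpha):
    """Amari alpha-divergence D_alpha(p || q) for discrete distributions.

    Assumed form (not taken from the abstract); valid for 0 < alpha < 1.
    """
    s = sum(pi ** alpha * qi ** (1.0 - alpha) for pi, qi in zip(p, q))
    return (1.0 - s) / (alpha * (1.0 - alpha))


def kl(a, b):
    """Kullback-Leibler divergence KL(a || b) for discrete distributions."""
    return sum(ai * math.log(ai / bi) for ai, bi in zip(a, b))


# Hypothetical toy distributions over three candidate outputs.
model = [0.7, 0.2, 0.1]
target = [0.5, 0.3, 0.2]

# alpha close to 0 and close to 1 stand in for the two limits.
near_zero = alpha_divergence(model, target, 1e-6)
near_one = alpha_divergence(model, target, 1.0 - 1e-6)

print("alpha -> 0:", near_zero, "vs KL(target || model):", kl(target, model))
print("alpha -> 1:", near_one, "vs KL(model || target):", kl(model, target))
```

Intermediate values such as `alpha = 0.5` interpolate between the two KL directions, which is one way to read the abstract's description of the gradient as a mixture of ML- and RL-based gradients.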

* 2017 ICML Workshop on Learning to Generate Natural Language (LGNL 2017)
