Multi-View Subgraph Neural Networks: Self-Supervised Learning with Scarce Labeled Data

Apr 19, 2024

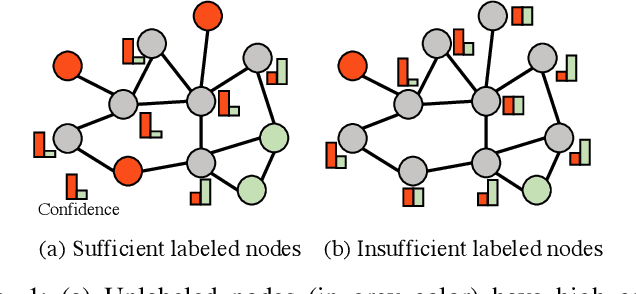

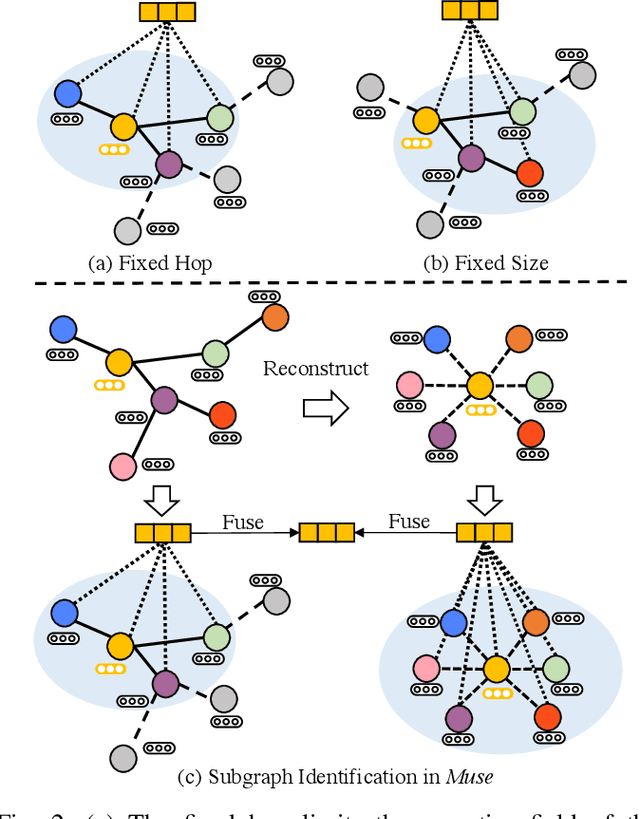

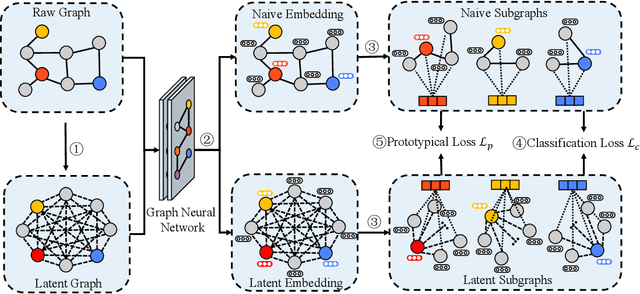

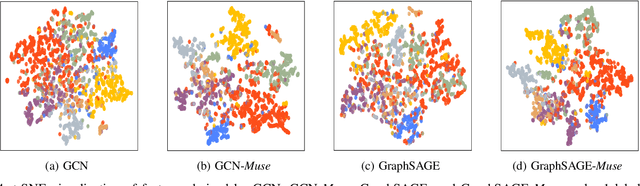

While graph neural networks (GNNs) have become the de facto standard for graph-based node classification, they assume that sufficient labeled samples are available. This assumption limits the classification performance of prevailing GNNs in many real-world applications that operate in low-data regimes. Specifically, features extracted from scarce labeled nodes cannot provide sufficient supervision for the unlabeled samples, leading to severe over-fitting. In this work, we point out that leveraging subgraphs to capture long-range dependencies can augment the representation of a node with homophily properties, thus alleviating the low-data problem. However, prior works that leverage subgraphs fail to capture the long-range dependencies among nodes. To this end, we present a novel self-supervised learning framework, called multi-view subgraph neural networks (Muse), for handling long-range dependencies. In particular, we propose an information theory-based identification mechanism to identify two types of subgraphs, one from the view of the input space and one from the view of the latent space. The former captures the local structure of the graph, while the latter captures the long-range dependencies among nodes. By fusing these two views of subgraphs, the learned representations preserve the topological properties of the graph at large, including both the local structure and the long-range dependencies, thus maximizing their expressiveness for downstream node classification tasks. Experimental results show that Muse outperforms the alternative methods on node classification tasks with limited labeled data.
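
To make the two-view idea concrete, the following is a minimal sketch and not the paper's method. The abstract does not specify the information theory-based identification mechanism, so this sketch swaps in two simple stand-ins: a k-hop breadth-first search for the input-space view, and cosine-similarity nearest neighbors for the latent-space view. The function names (`k_hop_neighbors`, `latent_neighbors`, `fuse_views`), mean pooling, and fusion by concatenation are all assumptions made for illustration.

```python
import math

def k_hop_neighbors(adj, node, k):
    """Input-space view (stand-in): nodes within k hops of `node`, found by BFS."""
    seen = {node}
    frontier = [node]
    for _ in range(k):
        next_frontier = []
        for u in frontier:
            for v in adj[u]:
                if v not in seen:
                    seen.add(v)
                    next_frontier.append(v)
        frontier = next_frontier
    seen.discard(node)
    return sorted(seen)

def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def latent_neighbors(emb, node, m):
    """Latent-space view (stand-in): the m nodes most similar to `node` in
    embedding space, regardless of how far apart they are in the graph."""
    scored = [(cosine(emb[node], emb[v]), v) for v in range(len(emb)) if v != node]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # ties broken by node id
    return [v for _, v in scored[:m]]

def mean_pool(emb, nodes):
    """Average the embeddings of the given nodes, one dimension at a time."""
    dim = len(emb[0])
    return [sum(emb[v][d] for v in nodes) / len(nodes) for d in range(dim)]

def fuse_views(adj, emb, node, k=1, m=2):
    """Fuse both views by concatenating their pooled subgraph embeddings
    (concatenation is an assumed fusion rule, chosen for simplicity)."""
    local_view = mean_pool(emb, [node] + k_hop_neighbors(adj, node, k))
    latent_view = mean_pool(emb, [node] + latent_neighbors(emb, node, m))
    return local_view + latent_view

# Toy path graph 0-1-2-3-4-5: nodes 0 and 5 are far apart but share features.
adj = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3, 5], 5: [4]}
emb = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]]

print(fuse_views(adj, emb, 0, k=1, m=1))  # prints [0.5, 0.5, 1.0, 0.0]
```

In this toy graph the input-space view of node 0 contains only its direct neighbor, node 1, while the latent-space view picks up node 5 at the opposite end of the path. That is the kind of long-range, homophilous relationship the abstract argues a purely local subgraph would miss.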
