Multi-Modal Diffusion for Hand-Object Grasp Generation


Sep 06, 2024

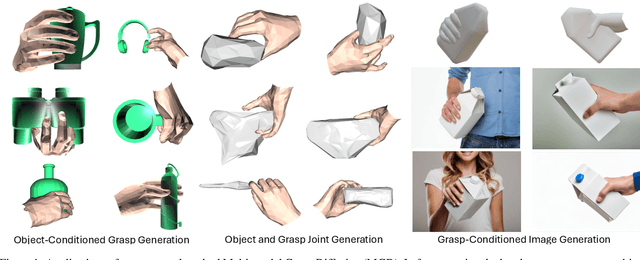

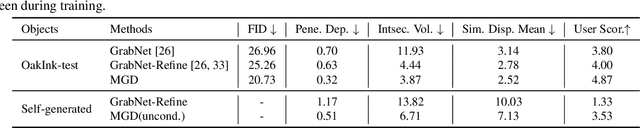

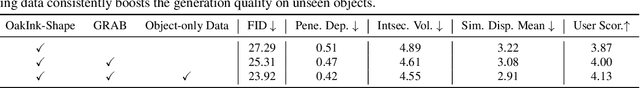

In this work, we focus on generating hand grasps on objects. Whereas previous work generates hand poses for a given object, we aim to generalize over both hand and object shapes with a single model. Our proposed method, Multi-modal Grasp Diffusion (MGD), learns the prior and the conditional posterior distributions of both modalities from heterogeneous data sources. It thereby alleviates the limitations of hand-object grasp datasets by leveraging large-scale 3D object datasets. In both qualitative and quantitative experiments, conditional and unconditional generation of hand grasps achieve good visual plausibility and diversity. The proposed method also generalizes well to unseen object shapes. The code and weights will be available at https://github.com/noahcao/mgd.
