MSN: Efficient Online Mask Selection Network for Video Instance Segmentation

Paper and Code

Jun 19, 2021

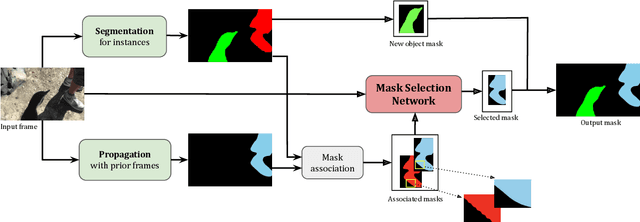

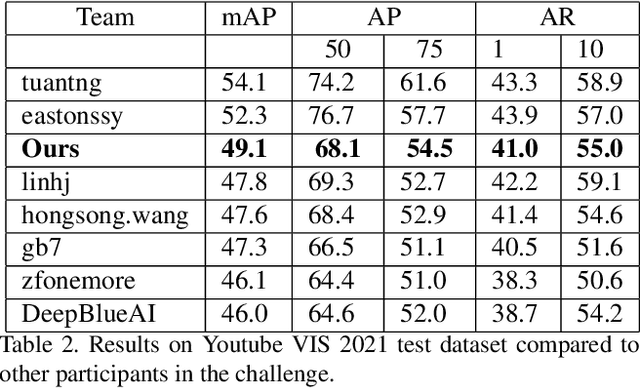

In this work we present a novel solution for Video Instance Segmentation(VIS), that is automatically generating instance level segmentation masks along with object class and tracking them in a video. Our method improves the masks from segmentation and propagation branches in an online manner using the Mask Selection Network (MSN) hence limiting the noise accumulation during mask tracking. We propose an effective design of MSN by using patch-based convolutional neural network. The network is able to distinguish between very subtle differences between the masks and choose the better masks out of the associated masks accurately. Further, we make use of temporal consistency and process the video sequences in both forward and reverse manner as a post processing step to recover lost objects. The proposed method can be used to adapt any video object segmentation method for the task of VIS. Our method achieves a score of 49.1 mAP on 2021 YouTube-VIS Challenge and was ranked third place among more than 30 global teams. Our code will be available at https://github.com/SHI-Labs/Mask-Selection-Networks.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge