Motion-aware Dynamic Graph Neural Network for Video Compressive Sensing

Paper and Code

Mar 01, 2022

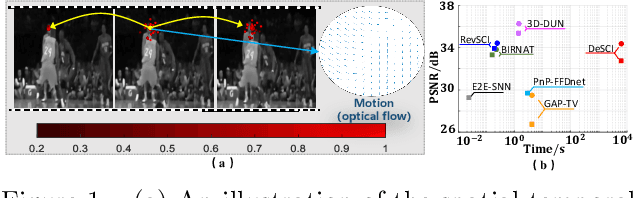

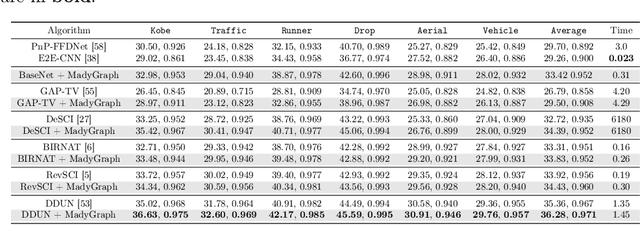

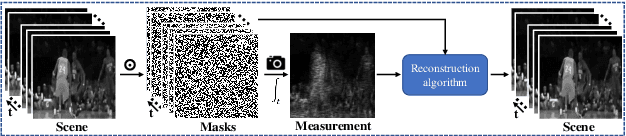

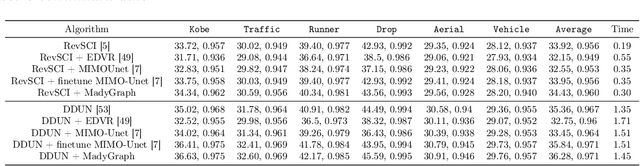

Video snapshot compressive imaging (SCI) utilizes a 2D detector to capture sequential video frames and compresses them into a single measurement. Various reconstruction methods have been developed to recover the high-speed video frames from the snapshot measurement. However, most existing reconstruction methods are incapable of capturing long-range spatial and temporal dependencies, which are critical for video processing. In this paper, we propose a flexible and robust approach based on graph neural network (GNN) to efficiently model non-local interactions between pixels in space as well as time regardless of the distance. Specifically, we develop a motion-aware dynamic GNN for better video representation, i.e., represent each pixel as the aggregation of relative nodes under the guidance of frame-by-frame motions, which consists of motion-aware dynamic sampling, cross-scale node sampling and graph aggregation. Extensive results on both simulation and real data demonstrate both the effectiveness and efficiency of the proposed approach, and the visualization clearly illustrates the intrinsic dynamic sampling operations of our proposed model for boosting the video SCI reconstruction results. The code and models will be released to the public.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge