Model-free Reinforcement Learning for Content Caching at the Wireless Edge via Restless Bandits

Paper and Code

Feb 26, 2022

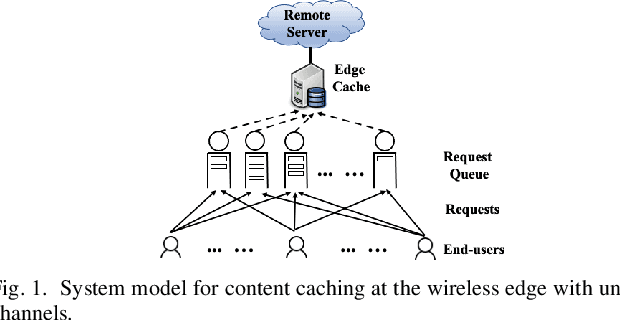

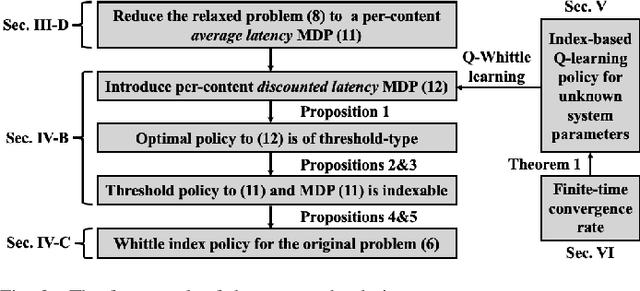

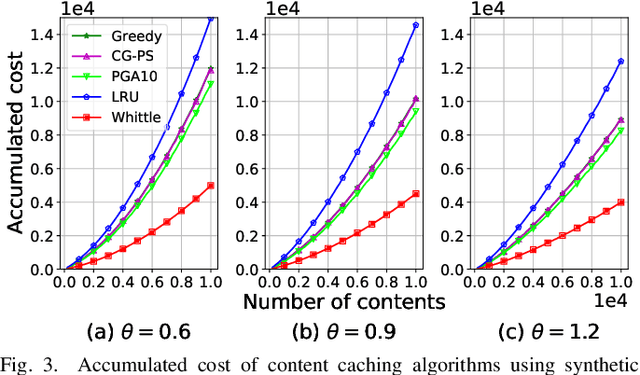

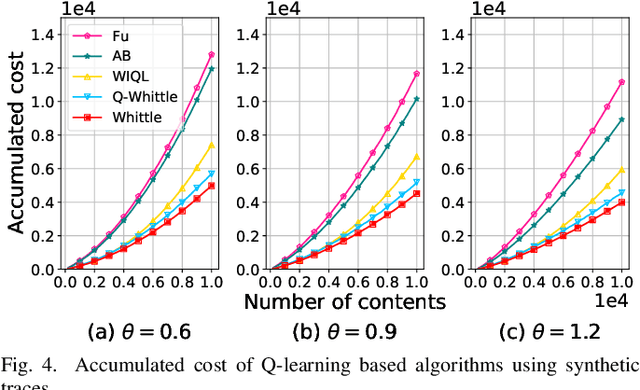

Explosive growth in the number of on-demand content requests has placed significant pressure on current wireless network infrastructure. To enhance the perceived user experience and support latency-sensitive applications, edge computing has emerged as a promising computing paradigm. The performance of a wireless edge depends on which contents are cached. In this paper, we consider the problem of content caching at the wireless edge with unreliable channels to minimize average content request latency. We formulate this problem as a restless bandit problem, which is provably hard to solve. We begin by investigating a discounted counterpart, and prove that it admits an optimal policy of threshold type. We then show that the result also holds for the average latency problem. Using these structural results, we establish the indexability of the problem, and employ the Whittle index policy to minimize average latency. Since system parameters such as content request rates are often unknown, we further develop a model-free reinforcement learning algorithm, dubbed Q-Whittle learning, that relies on our index policy. We also derive a bound on its finite-time convergence rate. Simulation results using real traces demonstrate that our proposed algorithms yield excellent empirical performance.
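To make the idea of an index policy concrete, the following is a minimal sketch of the generic selection step only: given one index value per content, cache the contents with the largest indices, up to the cache capacity. The function name `whittle_index_policy`, the `capacity` parameter, and the example index values are hypothetical and chosen for illustration; the abstract does not give the paper's index formula or the Q-Whittle learning updates, so neither is reproduced here.

```python
from typing import List, Sequence


def whittle_index_policy(indices: Sequence[float], capacity: int) -> List[int]:
    """Return the ids of the `capacity` contents with the largest index values.

    `indices[i]` is an index value for content i. In this sketch the values
    are supplied by the caller (assumption); how they are computed or learned
    is outside the scope of the example.
    """
    if capacity < 0:
        raise ValueError("capacity must be non-negative")
    # Rank content ids from highest to lowest index value.
    ranked = sorted(range(len(indices)), key=lambda i: indices[i], reverse=True)
    # Keep the top `capacity` ids, reported in ascending id order.
    return sorted(ranked[:capacity])


# Made-up index values for five contents and a cache that holds two of them.
example_indices = [0.8, 2.5, 1.1, 0.3, 1.9]
print(whittle_index_policy(example_indices, capacity=2))  # [1, 4]
```

The appeal of this structure is that each content's index is computed separately, so the per-step decision reduces to a ranking rather than a search over all possible cache configurations.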
