Mitigating Performance Saturation in Neural Marked Point Processes: Architectures and Loss Functions


Jul 07, 2021

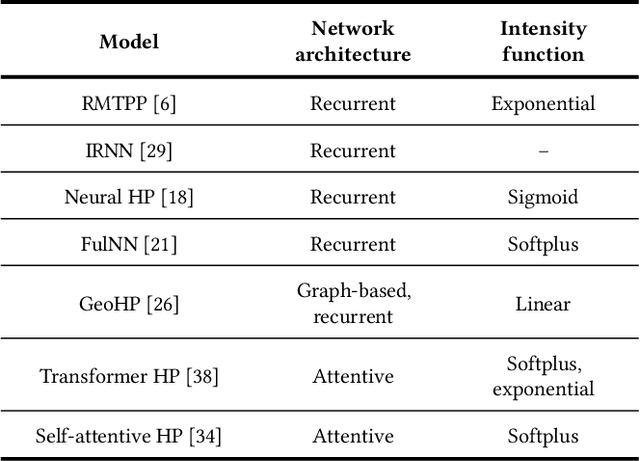

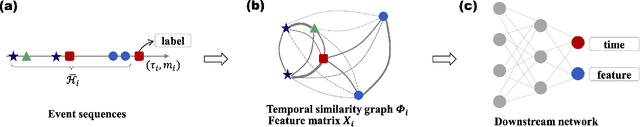

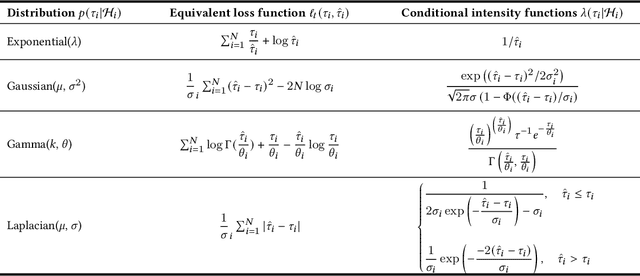

Attributed event sequences are commonly encountered in practice. A recent line of research combines neural networks with marked point processes, the conventional statistical tool for modeling attributed event sequences. Neural marked point processes offer both the interpretability of probabilistic models and the representational power of neural networks. However, we find that the performance of neural marked point processes does not always improve as the network architecture becomes larger and more complicated, a phenomenon we call performance saturation. This occurs because the generalization error of neural marked point processes is determined jointly by the network's representational ability and the model specification. We therefore draw two major conclusions: first, simple network structures can perform no worse than complicated ones in some cases; second, choosing a proper probabilistic assumption is at least as important as increasing the complexity of the network. Based on this observation, we propose a simple graph-based network structure called GCHP, which uses only graph convolutional layers and can therefore be easily accelerated through parallelization. We directly model the distribution of interarrival times instead of imposing a specific assumption on the conditional intensity function, and propose a likelihood ratio loss with a moment matching mechanism for optimization and model selection. Experimental results show that GCHP can significantly reduce training time, and that the likelihood ratio loss with interarrival time probability assumptions can greatly improve model performance.
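To make the idea of placing a probabilistic assumption directly on interarrival times more concrete, the following is a minimal sketch in plain Python. It is not GCHP and it does not implement the likelihood ratio loss or the moment matching mechanism; it only fits two candidate interarrival-time distributions (exponential and log-normal, both chosen here purely for illustration) by maximum likelihood and reports their mean log-likelihoods. All function names are hypothetical.

```python
import math


def interarrival_times(timestamps):
    """Gaps between consecutive event times (timestamps must be strictly increasing)."""
    return [b - a for a, b in zip(timestamps, timestamps[1:])]


def exponential_loglik(gaps):
    """Mean log-likelihood of the gaps under an exponential distribution fitted by MLE."""
    rate = len(gaps) / sum(gaps)  # MLE of the rate is 1 / sample mean
    return sum(math.log(rate) - rate * g for g in gaps) / len(gaps)


def lognormal_loglik(gaps):
    """Mean log-likelihood of the gaps under a log-normal distribution fitted by MLE."""
    logs = [math.log(g) for g in gaps]
    mu = sum(logs) / len(logs)
    var = sum((x - mu) ** 2 for x in logs) / len(logs)  # requires at least two distinct gaps
    return sum(
        -x - 0.5 * math.log(2 * math.pi * var) - (x - mu) ** 2 / (2 * var)
        for x in logs
    ) / len(logs)


# Illustrative event times only, not data from the paper.
gaps = interarrival_times([0.0, 1.0, 3.0, 4.0, 8.0])
scores = {
    "exponential": exponential_loglik(gaps),
    "log-normal": lognormal_loglik(gaps),
}
best = max(scores, key=scores.get)
print(scores, "-> higher mean log-likelihood:", best)
```

Comparing fitted log-likelihoods like this is only a stand-in for the model selection described in the abstract; in practice the comparison would be made on held-out sequences rather than on the data used for fitting.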
