MGDCF: Distance Learning via Markov Graph Diffusion for Neural Collaborative Filtering

Paper and Code

Apr 05, 2022

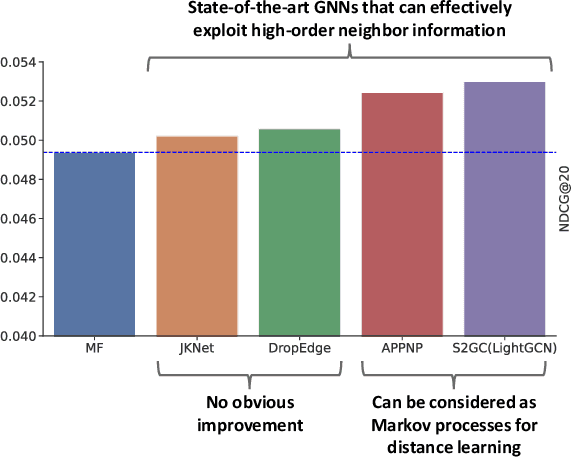

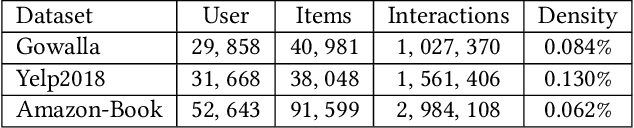

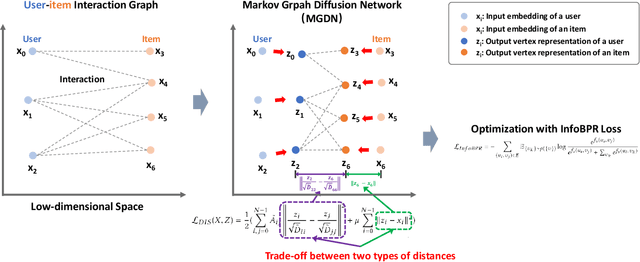

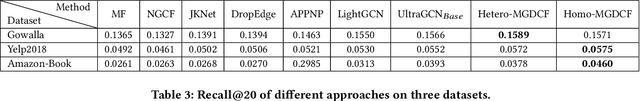

Collaborative filtering (CF) is widely used by personalized recommendation systems, which aims to predict the preference of users with historical user-item interactions. In recent years, Graph Neural Networks (GNNs) have been utilized to build CF models and have shown promising performance. Recent state-of-the-art GNN-based CF approaches simply attribute their performance improvement to the high-order neighbor aggregation ability of GNNs. However, we observe that some powerful deep GNNs such as JKNet and DropEdge, can effectively exploit high-order neighbor information on other graph tasks but perform poorly on CF tasks, which conflicts with the explanation of these GNN-based CF research. Different from these research, we investigate the GNN-based CF from the perspective of Markov processes for distance learning with a unified framework named Markov Graph Diffusion Collaborative Filtering (MGDCF). We design a Markov Graph Diffusion Network (MGDN) as MGDCF's GNN encoder, which learns vertex representations by trading off two types of distances via a Markov process. We show the theoretical equivalence between MGDN's output and the optimal solution of a distance loss function, which can boost the optimization of CF models. MGDN can generalize state-of-the-art models such as LightGCN and APPNP, which are heterogeneous GNNs. In addition, MGDN can be extended to homogeneous GNNs with our sparsification technique. For optimizing MGDCF, we propose the InfoBPR loss function, which extends the widely used BPR loss to exploit multiple negative samples for better performance. We conduct experiments to perform detailed analysis on MGDCF. The source code is publicly available at https://github.com/hujunxianligong/MGDCF.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge