Meta-Learning with Sparse Experience Replay for Lifelong Language Learning

Paper and Code

Sep 10, 2020

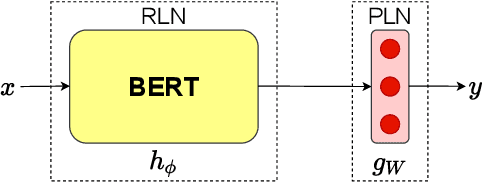

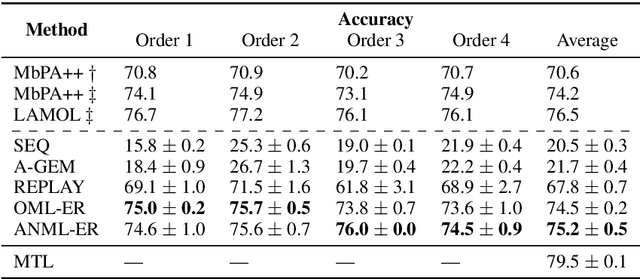

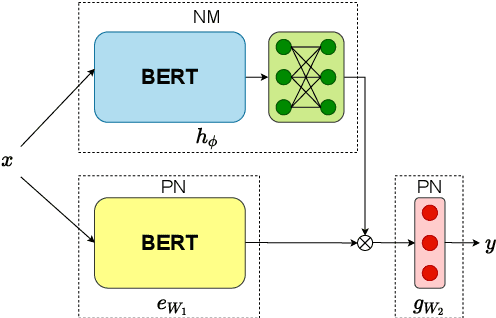

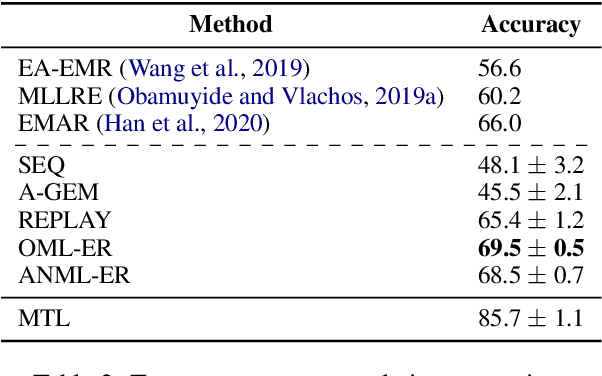

Lifelong learning requires models that can continuously learn from sequential streams of data without suffering catastrophic forgetting due to shifts in data distributions. Deep learning models have thrived in the non-sequential learning paradigm; however, when used to learn a sequence of tasks, they fail to retain past knowledge and learn incrementally. We propose a novel approach to lifelong learning of language tasks based on meta-learning with sparse experience replay that directly optimizes to prevent forgetting. We show that under the realistic setting of performing a single pass on a stream of tasks and without any task identifiers, our method obtains state-of-the-art results on lifelong text classification and relation extraction. We analyze the effectiveness of our approach and further demonstrate its low computational and space complexity.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge