Memory-adaptive Depth-wise Heterogenous Federated Learning

Paper and Code

Mar 08, 2023

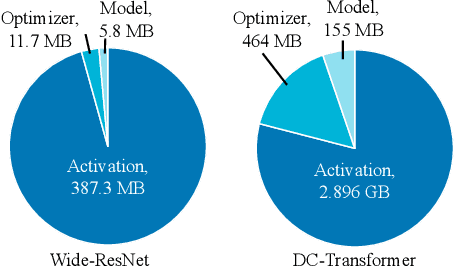

Federated learning is a promising paradigm that allows multiple clients to collaboratively train a model without sharing the local data. However, the presence of heterogeneous devices in federated learning, such as mobile phones and IoT devices with varying memory capabilities, would limit the scale and hence the performance of the model could be trained. The mainstream approaches to address memory limitations focus on width-slimming techniques, where different clients train subnetworks with reduced widths locally and then the server aggregates the subnetworks. The global model produced from these methods suffers from performance degradation due to the negative impact of the actions taken to handle the varying subnetwork widths in the aggregation phase. In this paper, we introduce a memory-adaptive depth-wise learning solution in FL called FeDepth, which adaptively decomposes the full model into blocks according to the memory budgets of each client and trains blocks sequentially to obtain a full inference model. Our method outperforms state-of-the-art approaches, achieving 5% and more than 10% improvements in top-1 accuracy on CIFAR-10 and CIFAR-100, respectively. We also demonstrate the effectiveness of depth-wise fine-tuning on ViT. Our findings highlight the importance of memory-aware techniques for federated learning with heterogeneous devices and the success of depth-wise training strategy in improving the global model's performance.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge