MaskRange: A Mask-classification Model for Range-view based LiDAR Segmentation

Paper and Code

Jun 24, 2022

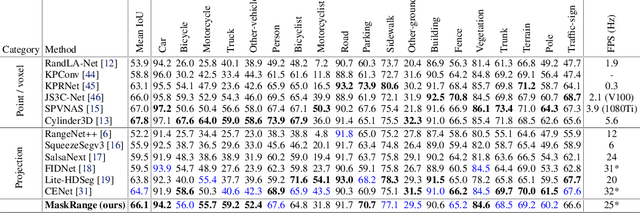

Range-view based LiDAR segmentation methods are attractive for practical applications due to their direct inheritance from efficient 2D CNN architectures. In literature, most range-view based methods follow the per-pixel classification paradigm. Recently, in the image segmentation domain, another paradigm formulates segmentation as a mask-classification problem and has achieved remarkable performance. This raises an interesting question: can the mask-classification paradigm benefit the range-view based LiDAR segmentation and achieve better performance than the counterpart per-pixel paradigm? To answer this question, we propose a unified mask-classification model, MaskRange, for the range-view based LiDAR semantic and panoptic segmentation. Along with the new paradigm, we also propose a novel data augmentation method to deal with overfitting, context-reliance, and class-imbalance problems. Extensive experiments are conducted on the SemanticKITTI benchmark. Among all published range-view based methods, our MaskRange achieves state-of-the-art performance with $66.10$ mIoU on semantic segmentation and promising results with $53.10$ PQ on panoptic segmentation with high efficiency. Our code will be released.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge