LumiPath - Towards Real-time Physically-based Rendering on Embedded Devices

Mar 09, 2019

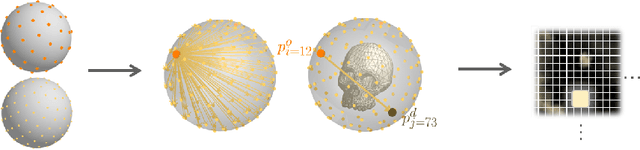

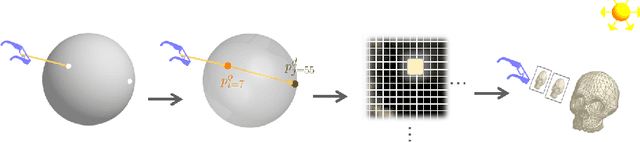

As the computational power of today's devices increases, real-time physically-based rendering becomes possible and is rapidly gaining attention across a variety of domains. These range from gaming, where physically-based rendering enhances immersion and the overall entertainment experience, to medicine, where it constitutes a powerful tool for intuitive volumetric data visualization. However, leveraging the benefits of physically-based rendering (also referred to as photo-realistic rendering) remains challenging on embedded devices such as optical see-through head-mounted displays because of their limited computational power and their restricted memory and power budgets. We propose methods that aim to overcome these limitations and enable real-time physically-based rendering on embedded devices. We navigate the trade-off between memory requirements, computational cost, and image quality to achieve reasonable rendering results by introducing a flexible representation of plenoptic functions and adapting a fast approximation algorithm for image generation from our plenoptic functions. We conclude by discussing potential applications and limitations of the proposed method.
