Learning the Roots of Visual Domain Shift

Jul 20, 2016

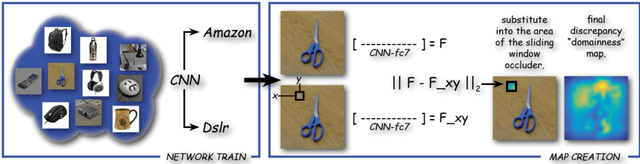

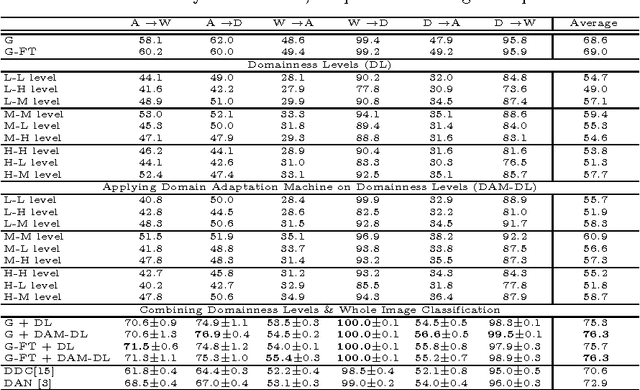

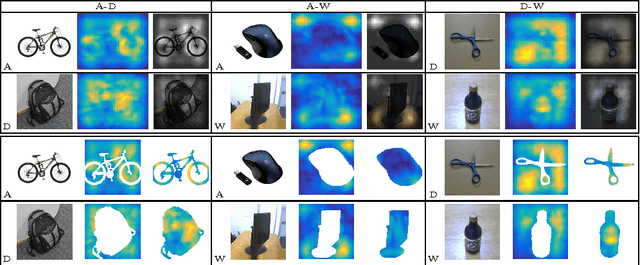

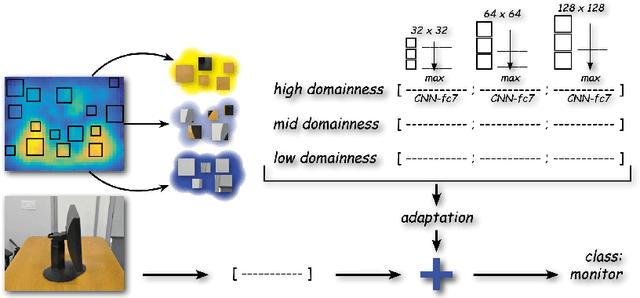

In this paper we focus on the spatial nature of visual domain shift, attempting to learn where domain shift originates in each given image of the source and target sets. We borrow concepts and techniques from the CNN visualization literature and learn domainness maps that localize the degree of domain specificity in images. From these maps we derive features related to different domainness levels, and we show that using them as a preprocessing step for a domain adaptation algorithm strongly improves the final classification performance. Combined with the whole-image representation, these features provide state-of-the-art results on the Office dataset.
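The abstract does not say how features are derived from a domainness map, so the following is only a minimal sketch of one plausible reading, not the authors' method. It assumes (hypothetically) that a domainness map is already available as a per-location score in [0, 1] aligned with a spatial CNN feature map, that domainness levels are defined by fixed thresholds, and that each level is summarized by average-pooling the features at its locations. The helper names `level_of` and `domainness_level_features` are illustrative only, and learning the map itself is not shown.

```python
from typing import List, Sequence

Vector = List[float]


def level_of(value: float, thresholds: Sequence[float]) -> int:
    """Hypothetical helper: index of the domainness level `value` falls into.

    Levels are delimited by sorted `thresholds`; e.g. [0.33, 0.66] yields
    level 0 (low), 1 (mid) and 2 (high domainness).
    """
    level = 0
    for t in thresholds:
        if value >= t:
            level += 1
    return level


def domainness_level_features(
    feature_map: Sequence[Sequence[Vector]],
    domainness_map: Sequence[Sequence[float]],
    thresholds: Sequence[float],
) -> Vector:
    """Hypothetical sketch: average-pool features separately per domainness level.

    feature_map[i][j] is a C-dimensional feature vector at location (i, j);
    domainness_map[i][j] is an assumed score in [0, 1], higher meaning more
    domain-specific. Returns one pooled vector per level, concatenated;
    a level with no locations contributes a zero vector.
    """
    num_levels = len(thresholds) + 1
    dim = len(feature_map[0][0])
    sums = [[0.0] * dim for _ in range(num_levels)]
    counts = [0] * num_levels

    # Accumulate each location's features into the bin of its domainness level.
    for row_feats, row_scores in zip(feature_map, domainness_map):
        for feat, score in zip(row_feats, row_scores):
            lvl = level_of(score, thresholds)
            counts[lvl] += 1
            for k, x in enumerate(feat):
                sums[lvl][k] += x

    # Turn sums into means and concatenate the per-level descriptors.
    pooled: Vector = []
    for lvl in range(num_levels):
        n = counts[lvl]
        pooled.extend(s / n if n else 0.0 for s in sums[lvl])
    return pooled


if __name__ == "__main__":
    feats = [[[1.0], [3.0]], [[5.0], [7.0]]]
    scores = [[0.1, 0.2], [0.5, 0.9]]
    print(domainness_level_features(feats, scores, [0.33, 0.66]))  # [2.0, 5.0, 7.0]
```

In this toy 2×2 example with 1-dimensional features, the two low-domainness locations are averaged into one descriptor and the mid and high levels each hold a single location. A descriptor of this kind could then be combined with a whole-image representation, in the spirit of the abstract.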

* Extended Abstract