Learning Regularized Multi-Scale Feature Flow for High Dynamic Range Imaging

Paper and Code

Jul 06, 2022

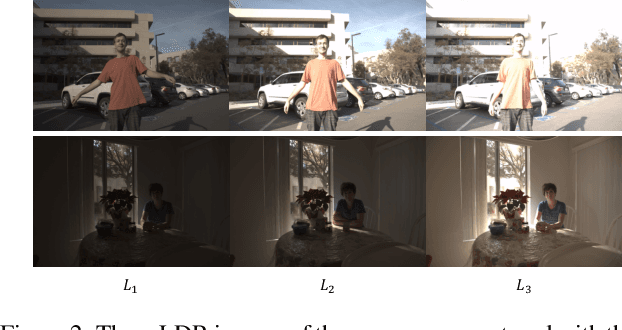

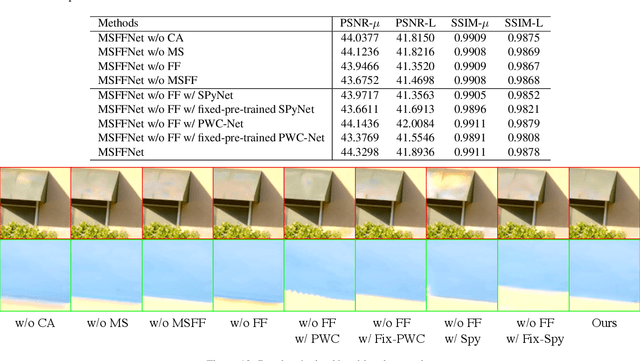

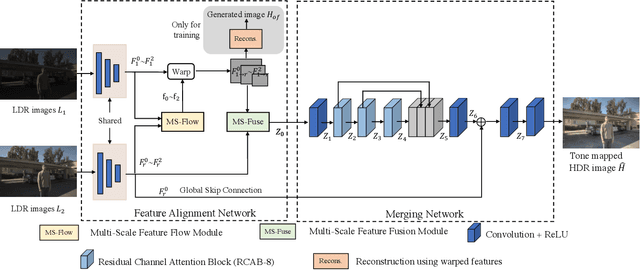

Reconstructing ghosting-free high dynamic range (HDR) images of dynamic scenes from a set of multi-exposure images is a challenging task, especially with large object motion and occlusions, leading to visible artifacts using existing methods. To address this problem, we propose a deep network that tries to learn multi-scale feature flow guided by the regularized loss. It first extracts multi-scale features and then aligns features from non-reference images. After alignment, we use residual channel attention blocks to merge the features from different images. Extensive qualitative and quantitative comparisons show that our approach achieves state-of-the-art performance and produces excellent results where color artifacts and geometric distortions are significantly reduced.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge