Learning Postural Synergies for Categorical Grasping through Shape Space Registration


Oct 18, 2018

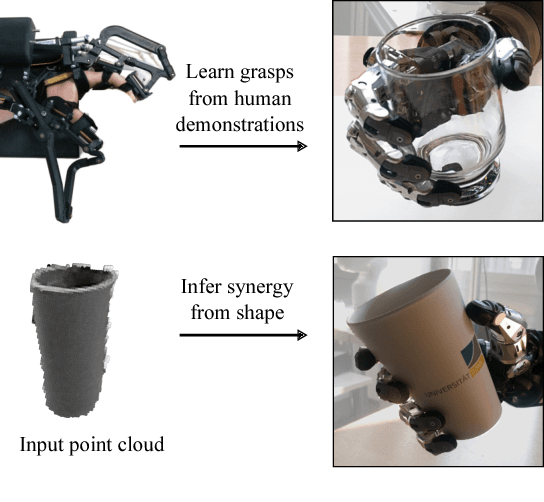

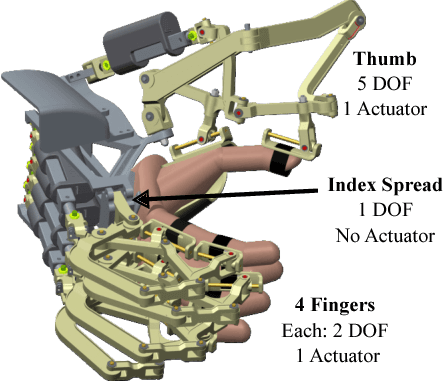

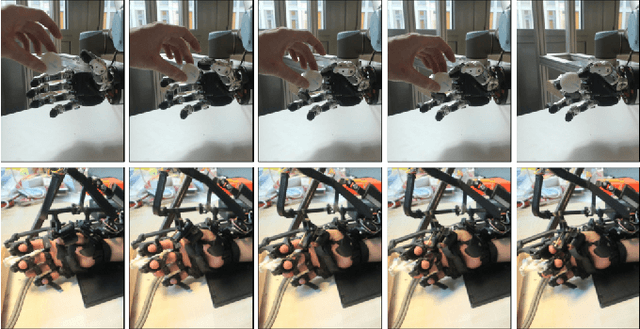

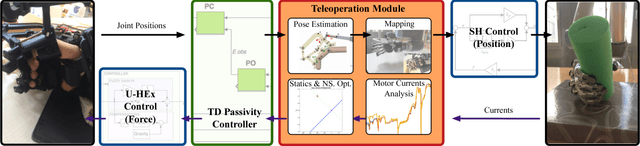

Whenever people encounter an object that is at least somewhat familiar, they immediately know how to grasp it. The hand adapts its movement to the object's geometry effortlessly, drawing on accumulated experience of grasping similar objects. In this paper, we present a novel method for inferring grasp configurations from object shape. Grasping knowledge is gathered in a synergy space of the robotic hand, built by following a human grasping taxonomy. The synergy space is constructed from human demonstrations using an exoskeleton with force feedback, which allows the quality of each grasp to be evaluated. The shape descriptor is obtained by means of a categorical non-rigid registration that encodes typical intra-class variations. This approach is especially suitable for on-line scenarios where only a portion of the object's surface is observable. The method is demonstrated in simulation and real-robot experiments by grasping objects the robot has never seen before.
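As a rough illustration of what a synergy space can look like, the sketch below builds one with principal component analysis (PCA), a common way to extract postural synergies from recorded hand postures. The abstract does not state which technique the paper uses, so PCA, the 16-joint hand, the synthetic demonstration data, and the function names `fit_synergies`, `to_synergy`, and `from_synergy` are all assumptions made for this example.

```python
import numpy as np


def fit_synergies(joint_angles, n_synergies):
    """Fit a linear synergy basis to recorded hand postures.

    joint_angles: array of shape (n_grasps, n_joints).
    Returns the mean posture and an (n_synergies, n_joints) orthonormal basis.
    """
    mean = joint_angles.mean(axis=0)
    centered = joint_angles - mean
    # Rows of vt are the principal directions, sorted by explained variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean, vt[:n_synergies]


def to_synergy(q, mean, basis):
    """Project joint angles onto low-dimensional synergy coordinates."""
    return (q - mean) @ basis.T


def from_synergy(s, mean, basis):
    """Map synergy coordinates back to a full joint configuration."""
    return mean + s @ basis


# Synthetic stand-in for demonstration data (assumption): 50 grasps of a
# hypothetical 16-joint hand, generated from 2 underlying latent factors.
rng = np.random.default_rng(0)
latent = rng.normal(size=(50, 2))
mixing = rng.normal(size=(2, 16))
demos = latent @ mixing + 0.5

mean, basis = fit_synergies(demos, n_synergies=2)
coords = to_synergy(demos, mean, basis)
recon = from_synergy(coords, mean, basis)
```

In a setup like this, a grasp is described by a few synergy coordinates instead of one value per joint, so a mapping from a shape descriptor to a grasp only needs to predict those few numbers.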
