Learning Dynamic MRI Reconstruction with Convolutional Network Assisted Reconstruction Swin Transformer

Paper and Code

Sep 19, 2023

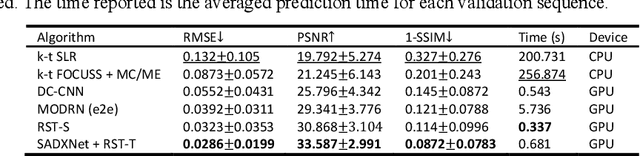

Dynamic magnetic resonance imaging (DMRI) is an effective imaging tool for diagnosis tasks that require motion tracking of a certain anatomy. To speed up DMRI acquisition, k-space measurements are commonly undersampled along spatial or spatial-temporal domains. The difficulty of recovering useful information increases with increasing undersampling ratios. Compress sensing was invented for this purpose and has become the most popular method until deep learning (DL) based DMRI reconstruction methods emerged in the past decade. Nevertheless, existing DL networks are still limited in long-range sequential dependency understanding and computational efficiency and are not fully automated. Considering the success of Transformers positional embedding and "swin window" self-attention mechanism in the vision community, especially natural video understanding, we hereby propose a novel architecture named Reconstruction Swin Transformer (RST) for 4D MRI. RST inherits the backbone design of the Video Swin Transformer with a novel reconstruction head introduced to restore pixel-wise intensity. A convolution network called SADXNet is used for rapid initialization of 2D MR frames before RST learning to effectively reduce the model complexity, GPU hardware demand, and training time. Experimental results in the cardiac 4D MR dataset further substantiate the superiority of RST, achieving the lowest RMSE of 0.0286 +/- 0.0199 and 1 - SSIM of 0.0872 +/- 0.0783 on 9 times accelerated validation sequences.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge