Learning Counterfactually Invariant Predictors

Paper and Code

Jul 20, 2022

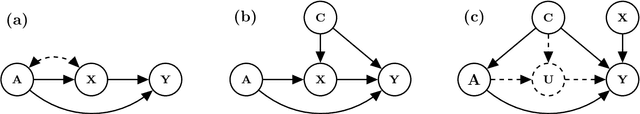

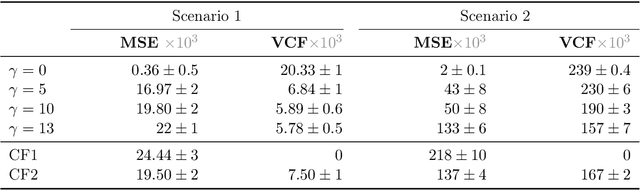

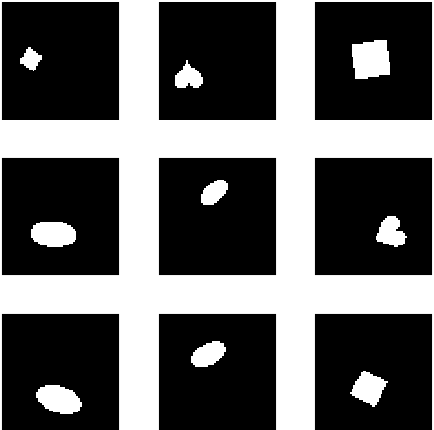

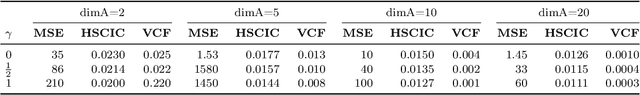

We propose a method to learn predictors that are invariant under counterfactual changes of certain covariates. This method is useful when the prediction target is causally influenced by covariates that should not affect the predictor output. For instance, an object recognition model may be influenced by position, orientation, or scale of the object itself. We address the problem of training predictors that are explicitly counterfactually invariant to changes of such covariates. We propose a model-agnostic regularization term based on conditional kernel mean embeddings, to enforce counterfactual invariance during training. We prove the soundness of our method, which can handle mixed categorical and continuous multi-variate attributes. Empirical results on synthetic and real-world data demonstrate the efficacy of our method in a variety of settings.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge