Learning Controls Using Cross-Modal Representations: Bridging Simulation and Reality for Drone Racing

Paper and Code

Sep 16, 2019

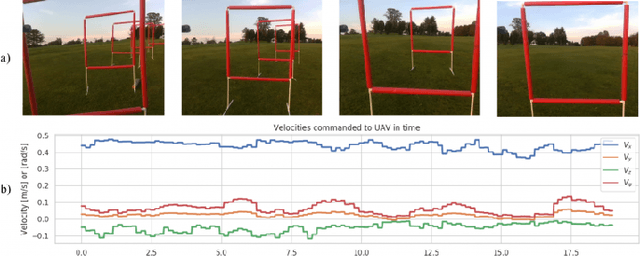

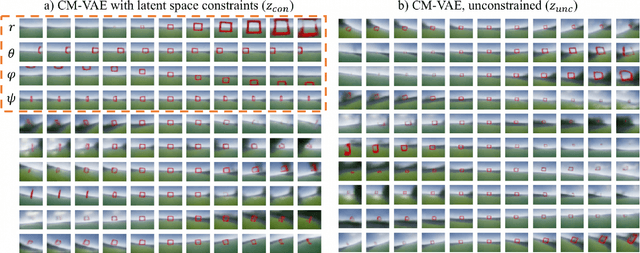

Machines are a long way from robustly solving open-world perception-control tasks, such as first-person view (FPV) drone racing. While recent advances in Machine Learning, especially Reinforcement and Imitation Learning, show promise, they are constrained by the need for large amounts of difficult-to-collect real-world data to learn robust behaviors in diverse scenarios. In this work we propose to learn rich representations and policies by leveraging unsupervised data, such as video footage from an FPV drone, together with easy-to-generate labeled simulation data. Our approach takes a cross-modal perspective, where the separate modalities correspond to the raw camera sensor data and the system states relevant to the task, such as the pose of gates relative to the UAV. We fuse both data modalities into a novel factored architecture that learns a joint low-dimensional representation via Variational Autoencoders. Such joint representations allow us to combine the rich labeled information available in simulation with the diversity of experiences captured in unsupervised real-world data. We present experiments in simulation that provide insights into the rich latent spaces learned with our proposed representations, and also show that our cross-modal architecture improves control policy performance by more than 5X in comparison with end-to-end learning or purely unsupervised feature extractors. Finally, we present real-life results for drone navigation, showing that the learned representations and policies can generalize across simulation and reality.
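To make the cross-modal idea concrete, the following is a minimal NumPy sketch of a loss in which one image encoder feeds two decoders: one reconstructs the image, the other regresses the gate pose. It is not the authors' implementation. The linear layers, the image size, the 4-number pose, the latent size, and every function name here are assumptions chosen for illustration, and the paper's factored latent structure is not modeled. The point it shows is that unlabeled real frames contribute only the image and KL terms, while labeled simulated frames add a pose term.

```python
import numpy as np

rng = np.random.default_rng(0)

IMG_DIM = 64 * 64 * 3   # flattened RGB frame (assumed size)
POSE_DIM = 4            # hypothetical gate pose relative to the UAV
LATENT_DIM = 10         # assumed size of the joint latent space

# Hypothetical linear encoder and decoders; a real model would use deep networks.
W_enc = rng.normal(0.0, 0.01, (IMG_DIM, 2 * LATENT_DIM))
W_img = rng.normal(0.0, 0.01, (LATENT_DIM, IMG_DIM))
W_pose = rng.normal(0.0, 0.01, (LATENT_DIM, POSE_DIM))


def encode(img):
    """Map a batch of images to the mean and log-variance of q(z | image)."""
    h = img @ W_enc
    return h[..., :LATENT_DIM], h[..., LATENT_DIM:]


def reparameterize(mu, logvar):
    """Sample z = mu + sigma * eps with eps ~ N(0, I)."""
    eps = rng.standard_normal(mu.shape)
    return mu + np.exp(0.5 * logvar) * eps


def kl_to_standard_normal(mu, logvar):
    """KL(q(z | image) || N(0, I)) per sample, for a diagonal Gaussian q."""
    return -0.5 * np.sum(1.0 + logvar - mu**2 - np.exp(logvar), axis=-1)


def cross_modal_loss(img, pose=None, beta=1.0):
    """Image reconstruction + KL, plus a pose term when labels are available."""
    mu, logvar = encode(img)
    z = reparameterize(mu, logvar)
    img_err = np.sum((z @ W_img - img) ** 2, axis=-1)
    loss = np.mean(img_err + beta * kl_to_standard_normal(mu, logvar))
    if pose is not None:  # labeled (simulated) batch
        loss += np.mean(np.sum((z @ W_pose - pose) ** 2, axis=-1))
    return loss


# Unlabeled batch (e.g. real FPV footage) vs. labeled batch (e.g. simulation).
real_frames = rng.random((8, IMG_DIM))
sim_frames = rng.random((8, IMG_DIM))
sim_poses = rng.random((8, POSE_DIM))
loss_unlabeled = cross_modal_loss(real_frames)
loss_labeled = cross_modal_loss(sim_frames, sim_poses)
```

In a training loop, both kinds of batch would update the shared encoder, which is what lets the latent space absorb real-world image diversity while staying tied to the task-relevant state.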
