Learn to Resolve Conversational Dependency: A Consistency Training Framework for Conversational Question Answering

Paper and Code

Jun 22, 2021

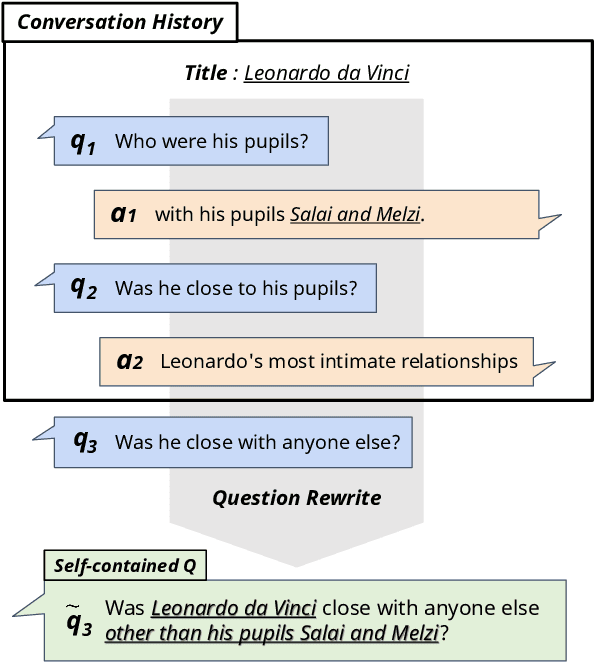

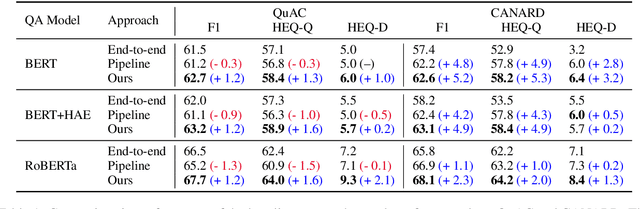

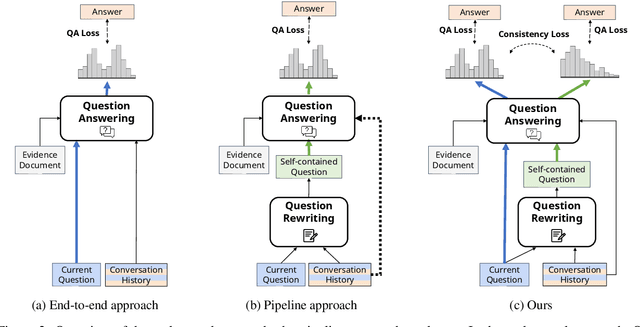

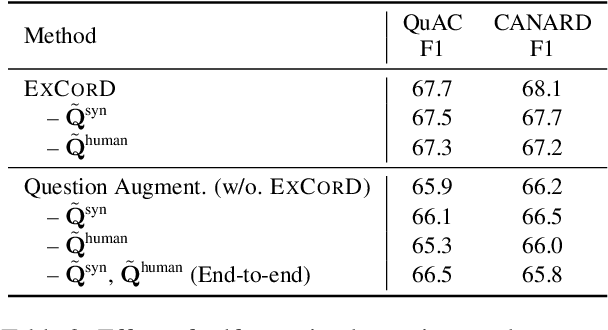

One of the main challenges in conversational question answering (CQA) is to resolve the conversational dependency, such as anaphora and ellipsis. However, existing approaches do not explicitly train QA models on how to resolve the dependency, and thus these models are limited in understanding human dialogues. In this paper, we propose a novel framework, ExCorD (Explicit guidance on how to resolve Conversational Dependency) to enhance the abilities of QA models in comprehending conversational context. ExCorD first generates self-contained questions that can be understood without the conversation history, then trains a QA model with the pairs of original and self-contained questions using a consistency-based regularizer. In our experiments, we demonstrate that ExCorD significantly improves the QA models' performance by up to 1.2 F1 on QuAC, and 5.2 F1 on CANARD, while addressing the limitations of the existing approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge