Large-Scale Joint Topic, Sentiment & User Preference Analysis for Online Reviews


Jan 14, 2019

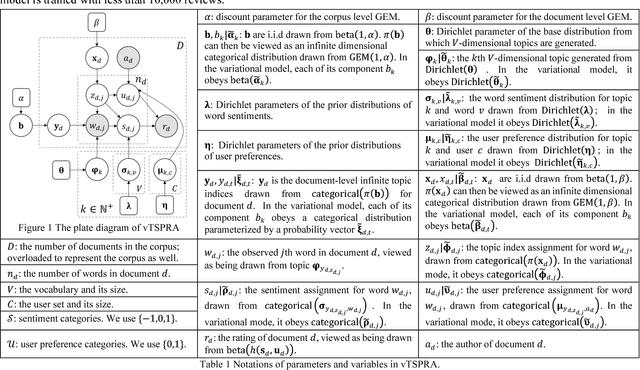

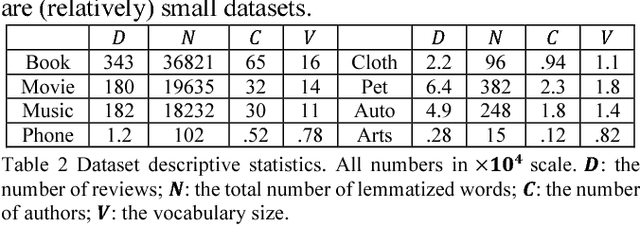

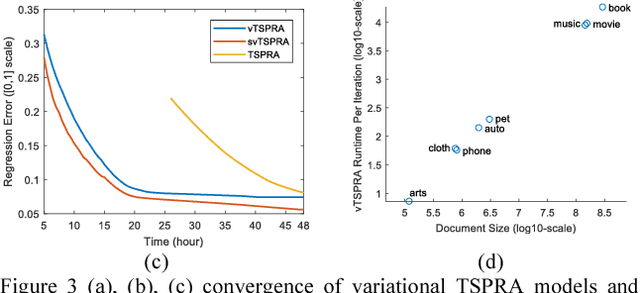

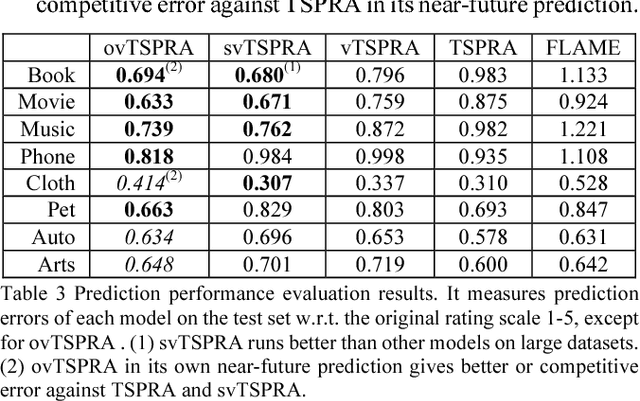

This paper presents a non-trivial reconstruction of TSPRA, a previous joint topic-sentiment-preference review model, using a stick-breaking representation under the frameworks of variational inference (VI) and stochastic variational inference (SVI). TSPRA is a Gibbs-sampling-based model that jointly infers topics, word sentiments, and user preferences. It has been shown to achieve good performance, but on large data sets it can only learn from a relatively small sample. We develop the variational models vTSPRA and svTSPRA to reduce running time, and the new approach is capable of processing millions of reviews. We rebuild the generative process, improve the rating regression, derive and present the coordinate-ascent updates of the variational parameters, and show that the time complexity of each iteration is theoretically linear in the corpus size. Experiments on Amazon data sets show that the new approach converges faster than TSPRA and attains better results given the same amount of time. In addition, we adapt svTSPRA into an online algorithm, ovTSPRA, that can monitor oscillations of sentiment and preference over time. Some interesting fluctuations are captured, and possible explanations are provided. The results give strong visual evidence that user preference is better treated as a factor independent of sentiment.
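For readers unfamiliar with the stick-breaking representation mentioned in the abstract, the following is a minimal sketch of the generic truncated stick-breaking construction of mixture weights. It is not the TSPRA model or the paper's variational updates. The function name `stick_breaking_weights` and the parameters `alpha`, `num_sticks`, and `seed` are hypothetical, chosen only for illustration.

```python
import random


def stick_breaking_weights(alpha, num_sticks, seed=0):
    """Draw truncated stick-breaking weights (illustrative only).

    Each step breaks off a Beta(1, alpha) fraction of the remaining
    stick; the final weight takes whatever is left, so the weights
    sum to 1. All names here are assumptions, not from the paper.
    """
    rng = random.Random(seed)
    remaining = 1.0  # length of the stick not yet assigned
    weights = []
    for _ in range(num_sticks - 1):
        frac = rng.betavariate(1.0, alpha)  # fraction to break off
        weights.append(remaining * frac)
        remaining *= 1.0 - frac
    weights.append(remaining)  # truncation: last stick gets the rest
    return weights


if __name__ == "__main__":
    w = stick_breaking_weights(alpha=2.0, num_sticks=10)
    print([round(x, 4) for x in w], "sum =", round(sum(w), 6))
```

A larger `alpha` tends to spread mass over more sticks, while a smaller `alpha` concentrates it on the first few. Truncating at a fixed number of sticks is a common device in variational treatments, though whether the paper truncates in this way is not stated in the abstract.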
