KGBoost: A Classification-based Knowledge Base Completion Method with Negative Sampling

Dec 17, 2021

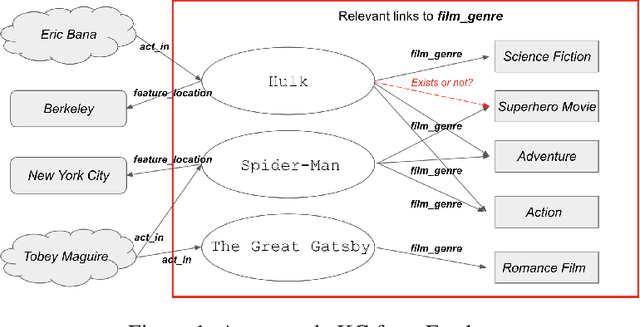

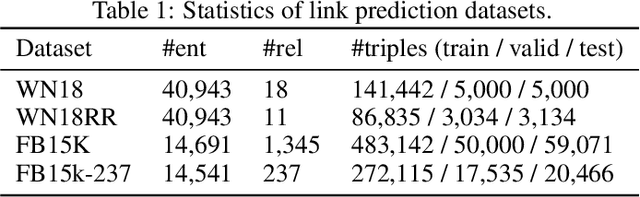

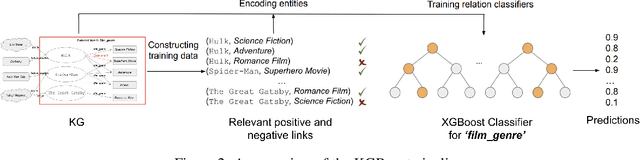

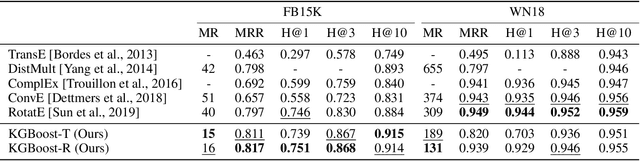

This work formulates knowledge base completion as a binary classification problem: an XGBoost binary classifier is trained for each relation using the relevant links in knowledge graphs (KGs). The new method, named KGBoost, adopts a modularized design and searches for hard negative samples in order to train a powerful classifier for missing link prediction. We conduct experiments on multiple benchmark datasets and demonstrate that KGBoost outperforms state-of-the-art methods on most of them. Furthermore, compared with models trained by end-to-end optimization, KGBoost works well in the low-dimensional setting, which allows a smaller model size.
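
To make the per-relation classification setup concrete, here is a minimal sketch of how labeled training sets could be assembled, one per relation. It is an illustration under stated assumptions, not the paper's procedure: the function name `build_relation_datasets`, its parameters, and the toy triples are hypothetical, and negatives are drawn by uniform random tail corruption rather than by the hard negative mining the abstract mentions (which is not detailed here).

```python
import random
from collections import defaultdict


def build_relation_datasets(triples, negatives_per_positive=1, seed=0):
    """Group (head, relation, tail) triples by relation and add labeled negatives.

    Hypothetical helper: negatives come from uniform random tail corruption,
    a simple stand-in for a hard negative sampling strategy.
    """
    rng = random.Random(seed)
    known = set(triples)
    # Sorted so that sampling is reproducible for a fixed seed.
    entities = sorted({e for h, _, t in triples for e in (h, t)})

    by_relation = defaultdict(list)
    for h, r, t in triples:
        by_relation[r].append((h, t))

    datasets = {}
    for r, pairs in by_relation.items():
        # Observed links are the positive examples (label 1).
        examples = [((h, t), 1) for h, t in pairs]
        for h, _ in pairs:
            # Only keep corrupted tails that do not form a known true triple.
            candidates = [e for e in entities if (h, r, e) not in known]
            k = min(negatives_per_positive, len(candidates))
            for e in rng.sample(candidates, k):
                examples.append(((h, e), 0))
        datasets[r] = examples
    return datasets


# Toy knowledge graph (made-up data for illustration only).
triples = [
    ("alice", "works_at", "acme"),
    ("bob", "works_at", "globex"),
    ("acme", "located_in", "paris"),
]
datasets = build_relation_datasets(triples)
```

Each entry of `datasets` holds the labeled (head, tail) pairs for one relation. In a full pipeline, each pair would be turned into a feature vector (for example from entity embeddings) and passed to a separate binary classifier for that relation.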