Interpretable to Whom? A Role-based Model for Analyzing Interpretable Machine Learning Systems

Jun 20, 2018

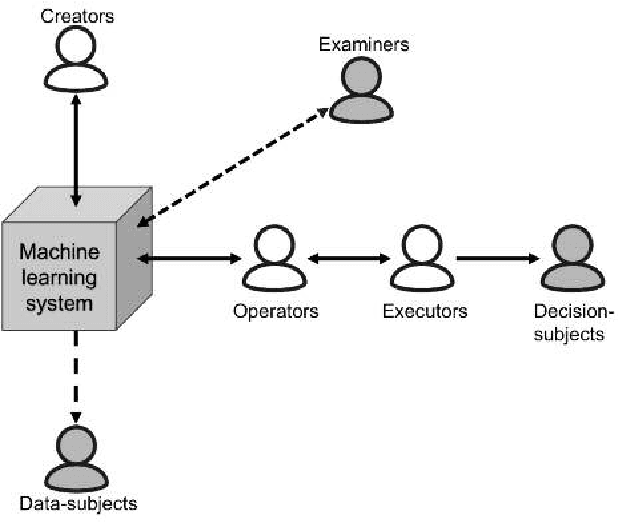

Several researchers have argued that a machine learning system's interpretability should be defined in relation to a specific agent or task: we should not ask if the system is interpretable, but to whom is it interpretable. We describe a model intended to help answer this question, by identifying different roles that agents can fulfill in relation to the machine learning system. We illustrate the use of our model in a variety of scenarios, exploring how an agent's role influences its goals, and the implications for defining interpretability. Finally, we make suggestions for how our model could be useful to interpretability researchers, system developers, and regulatory bodies auditing machine learning systems.

* Presented at the 2018 ICML Workshop on Human Interpretability in Machine Learning (WHI 2018), Stockholm, Sweden
